The Shift to the 'Inference Era' and the Revaluation of the CPU: A Technical View and What Investors Should Watch

For the past several years, AI infrastructure investment has been almost entirely about GPUs.

Training large language models requires huge volumes of matrix math executed at high speed. Because of this, Nvidia GPUs, HBM, advanced packaging, and data center power have been the central themes of AI investment.

But something a little different is starting to happen.

The use of AI is shifting from “training” — building the model — to “inference,” where the model actually answers user questions, writes code, and performs work.

The most important piece of this shift is AI agents.

An AI agent is not just a chatbot that returns a single response. It breaks tasks into pieces, calls external tools, branches on conditions, and runs inference many times as needed.

In this kind of work, not only GPUs but also CPUs, memory, storage, networking, and the system that orchestrates all of them become more important.

The recent rise in CPU prices is, in my view, a very clear signal of this change.

Recent moves

First, Meta signing a large deal to use Amazon’s Graviton CPUs is a strong hint about how important CPUs have become.

According to Amazon, Meta signed a multi-year deal worth several billion dollars to use AWS Graviton CPUs for its AI infrastructure. AWS explains that this contract supports Meta’s AI agent workloads.

This shows that the value of AI infrastructure is no longer determined by GPUs alone.

Of course, GPUs remain at the center for large model training and large-scale parallel computation. But once AI agents start running inside actual business workflows, the nature of the workload changes a lot.

An agent workload means receiving many requests, managing state, calling external APIs, moving data around, and feeding inference results into the next step.

In this kind of processing, control and orchestration on the CPU matter alongside the raw compute of the GPU.

Intel’s strong earnings show the CPU is being reevaluated

This trend also shows up in Intel’s results.

Intel reported Q1 2026 revenue of $13.6 billion, up 7% year-over-year. The Data Center and AI segment in particular came in at $5.1 billion, up 22% year-over-year. Intel CEO Lip-Bu Tan said that as the next wave of AI moves from foundation models to inference and agentic AI, demand for CPUs and advanced packaging is rising sharply.

Reuters also reports that Intel’s stock surge was driven by strong demand for AI data center CPUs. Intel’s CFO said supply was tight in Q1, and the company even shipped inventory it had previously set aside to meet customer demand.

The important point here is that Intel’s reevaluation is not just a recovery in PC demand.

It looks more like investors are changing their view because the configuration of AI data centers is changing, and CPUs are starting to run short again.

CPU price increases are evidence that the AI infrastructure bottleneck is widening

Tom’s Hardware reports that as AI workloads expand from training to inference, and especially to agentic AI, demand for server CPUs is rising sharply.

The same report says that traditional AI servers typically had a ratio of 1 CPU for every 4 to 8 GPUs, while inference and agentic AI workloads may push the CPU-to-GPU ratio closer to 1:1.

It also says that server CPU prices have risen by 10% to 20% since March 2026, and consumer CPU prices have risen by 5% to 10%.
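
To make the ratio shift concrete, here is a minimal arithmetic sketch. The fleet size of 1,024 GPUs is a hypothetical number chosen for illustration and does not come from the report; only the 1:4 to 1:8 and 1:1 ratios do.

```python
import math


def cpus_needed(gpus: int, gpus_per_cpu: float) -> int:
    """CPUs required to serve `gpus` at a given GPUs-per-CPU ratio, rounded up."""
    return math.ceil(gpus / gpus_per_cpu)


# Hypothetical fleet size, used only to illustrate the ratios cited above.
FLEET_GPUS = 1024

scenarios = [
    ("traditional AI server, 1 CPU per 8 GPUs", 8),
    ("traditional AI server, 1 CPU per 4 GPUs", 4),
    ("inference / agentic AI, close to 1:1", 1),
]

for label, gpus_per_cpu in scenarios:
    print(f"{label}: {cpus_needed(FLEET_GPUS, gpus_per_cpu)} CPUs")
```

Under these assumptions, the same GPU fleet would need 128 to 256 CPUs at the traditional ratios and 1,024 CPUs at 1:1, which is a 4x to 8x increase in CPU count before any price change is taken into account.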

What this tells us is that the constraint on AI infrastructure is no longer GPUs alone.

HBM and DRAM shortages, advanced packaging constraints, power, cooling, and land were already bottlenecks. Now CPUs are starting to see price increases too.

In other words, AI infrastructure investment is moving from the simple question of “how many GPUs can you secure?” to a competition over how to procure and optimize CPUs, GPUs, memory, networking, storage, and power as a whole.

The Google–Intel partnership shows the importance of CPUs and IPUs

The Google–Intel partnership also supports this trend.

In April 2026, Intel announced an expanded collaboration with Google. Google Cloud will continue to use Intel Xeon processors for its AI, inference, and general-purpose workloads, and the two will also expand joint development of custom ASIC-based IPUs — Infrastructure Processing Units. Intel itself describes this collaboration by saying that CPUs and IPUs play a central role in modern heterogeneous AI systems.

What is worth noting here is that Google is not simply buying Intel CPUs.

Google appears to be combining CPUs and IPUs to design a system that runs the AI-era data center more efficiently.

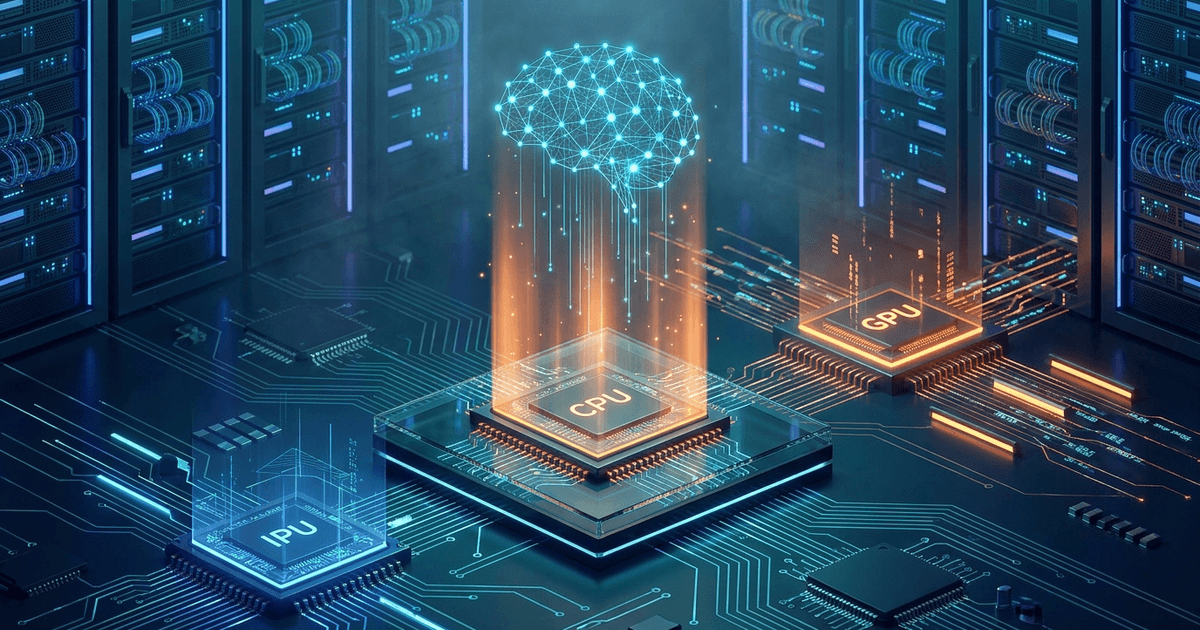

Let me touch on the roles of CPU, GPU, and IPU here.

The CPU is the “conductor” that is good at complex branching and ordered processing. When an AI agent breaks a task into pieces, calls external tools, and decides what to do next, the CPU plays an important role.

The GPU is the “engine” that runs large amounts of matrix math in parallel. For training and running large models, the GPU continues to play the central role.

The IPU, on the other hand, is not so much about accelerating computation itself. It takes over infrastructure work like networking, storage, security, and virtualization.

In other words, the IPU is a different kind of worker from the CPU or GPU.

If you compare a data center to a restaurant, the CPU and GPU are the cooks who make the food. The IPU is more like the operations staff who handle delivery, inventory, accounting, security, and keeping the kitchen organized.

If the cooks also have to take care of all the operational chores, both the speed and the quality of the food drop.

The same thing is happening in data centers.

In large cloud environments, a huge amount of work happens that is separate from the user’s actual computation: server-to-server communication, storage reads and writes, encryption, authentication, virtual machine management, and so on. If all of this is handled by the CPU, part of the expensive CPU resource gets consumed by “infrastructure management” instead of “the actual computation.”

This burden is often called the “data center tax.”

Intel describes the IPU E2000 as a way for cloud providers and telcos to reduce CPU overhead and free up CPU performance for actual workloads. The IPU E2000, co-designed with Google, is built so that network processing is offloaded to the IPU and the Xeon CPUs can focus on customer workloads.
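
The effect of this "data center tax" can be sketched with simple arithmetic. The core count and the overhead fractions below are hypothetical placeholders, not figures from Intel or Google; the point is only to show how the share of CPU spent on infrastructure translates into capacity for customer workloads.

```python
def workload_cores(total_cores: int, infra_fraction: float) -> float:
    """Cores left for customer workloads after infrastructure overhead."""
    if not 0.0 <= infra_fraction <= 1.0:
        raise ValueError("infra_fraction must be between 0 and 1")
    return total_cores * (1.0 - infra_fraction)


# All three numbers are assumptions for illustration only.
TOTAL_CORES = 64            # cores in one hypothetical server
TAX_WITHOUT_OFFLOAD = 0.25  # share of CPU spent on networking, storage, security
TAX_WITH_OFFLOAD = 0.05     # share remaining after offloading to an IPU

before = workload_cores(TOTAL_CORES, TAX_WITHOUT_OFFLOAD)
after = workload_cores(TOTAL_CORES, TAX_WITH_OFFLOAD)
gain = after / before - 1.0

print(f"cores for workloads without offload: {before:.1f}")
print(f"cores for workloads with offload:    {after:.1f}")
print(f"extra workload capacity:             {gain:.1%}")
```

With these assumed values, the same server goes from 48 to roughly 61 usable cores, about 27% more capacity for customer workloads without adding a single CPU. The real gain depends entirely on how large the overhead actually is in a given environment.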

Seen in this context, the reason Google is jointly developing IPUs with Intel becomes quite clear.

In the inference era, especially the era of AI agents, simply lining up large numbers of GPUs is not enough. An AI agent does not finish in a single big computation. It generates many small requests, talks to external tools, reads and writes data, checks permissions, and feeds the results into the next inference.

In this kind of processing, the efficiency of the entire infrastructure — including the CPU, network, storage, and security — matters, not just the raw compute of the GPU.

Within this picture, the IPU’s role is to free up the CPU.

Instead of getting tied up in network and storage management, the CPU can focus on controlling the AI agent and managing tasks. The IPU is becoming an important supporting component that makes this possible.

For Google, this is a way to raise the efficiency of the entire cloud. Offloading infrastructure work to the IPU makes it easier to improve the performance of virtual machines and inference environments. With less CPU capacity spent on infrastructure, the same servers can potentially handle more customer workloads.

For Intel, it means positioning Xeon not as a standalone CPU part, but as the foundation of an AI infrastructure combined with the IPU. You could say Intel is offering an alternative to the GPU-centric view of AI infrastructure: a balanced system that includes the CPU and the IPU.

Why is Google partnering with Intel now?

Let me think about why Google is partnering closely with Intel.

Google has developed its own TPU and also has Axion, an Arm-based custom CPU. It is one of the most capable companies in the world at designing AI infrastructure in-house.

Even so, Google is partnering with Intel. In my view, the reason is not simple CPU procurement, but continuity with existing infrastructure, joint development of custom IPUs, and system integration.

First, Google Cloud’s infrastructure has used Intel Xeon for many years.

Replacing the entire existing Xeon-based environment all at once would carry a lot of technical and operational risk.

In AI infrastructure, not only performance but also stability, compatibility, and operational track record matter.

Because of this, a design that keeps the existing Xeon environment in place while carving out only the infrastructure processing using IPUs is quite practical.

Second, the Google–Intel IPU collaboration is not new.

Google and Intel started collaborating on an IPU called Mount Evans back in 2021. The IPU offloads infrastructure processing from the CPU and redirects server CPU cycles to customer workloads.

So this latest deal is not a sudden change of direction.

It is a continuation of the infrastructure acceleration work that Google has been pushing for years.

Third, Intel has accumulated experience integrating CPUs, IPUs, networking, and advanced packaging at the system level.

Google has the design capability, but to mass-produce infrastructure parts that run reliably across a whole data center, integrate them with the existing Xeon environment, and harden them for real cloud operations, it makes sense to use Intel’s experience.

The right way to read this is not “Google depends on Intel,” but rather “Google is spreading its partners across different use cases.”

Google has its own TPU, and it is also reportedly in talks with Marvell about an inference chip. At the same time, with Intel, it is jointly developing the parts close to the foundation of the infrastructure, around Xeon and the IPU.

This is a survival strategy that fits a hyperscaler.

Rather than handing everything to a single vendor, it spreads GPUs, TPUs, CPUs, IPUs, ASICs, and networking and memory peripherals across use cases, and ultimately optimizes its own data centers as a whole.

In this sense, the Google–Intel partnership is not simply about Intel CPUs making a comeback.

I think it shows that AI infrastructure in the inference era is moving from a competition over the performance of individual chips to a competition over the optimization of the entire system architecture.

The rise of SiFive and RISC-V

Another company worth noting is SiFive.

According to Reuters, SiFive — a chip design company based on RISC-V — raised $400 million from Nvidia, Atreides, Apollo, Point72, T. Rowe Price, and others, putting its valuation at $3.65 billion. SiFive plans to push forward with data center CPU development.

This shows that the CPU market is no longer just about Intel and AMD.

RISC-V is an open-standard instruction set, and is said to offer more freedom for customization than Arm or x86. In AI data centers, cloud operators and chip companies have a stronger desire than ever to optimize chips for their own workloads.

In this trend, the value of a neutral CPU IP company like SiFive seems to be rising.

What is especially interesting is that Nvidia is among SiFive’s investors. Nvidia is often seen as a GPU company, but in reality it is trying to design the entire AI factory — not only GPUs, but also CPUs, networks, racks, and software.

Seen in this context, it is natural for Nvidia to take an interest in RISC-V CPU IP. A company that designs the whole system has to answer questions like these:

- What kind of CPU should sit next to the GPU?

- How should GPUs, CPUs, memory, and the network be connected?

- Which parts should the company own, and which should be left to partners?

The competitive axis of AI infrastructure has already moved from individual chips to the system as a whole.

Google–Marvell moves also point to a system-level competition in the inference era

Reports that Google is in talks with Marvell on AI chip development fit the same trend.

Reuters, citing The Information, reports that Google is in talks with Marvell about two kinds of AI chips. One is a memory processing unit to combine with Google’s TPU, and the other is a new TPU designed to run AI models more efficiently.

This shows that the competition in the inference era is not a simple “GPU vs. TPU” matchup.

To run AI models efficiently, you need optimization that covers not just compute, but also proximity of memory, data movement, networking, and the software stack.

Google has the TPU, and even so, reports suggest it may partner with custom silicon companies like Marvell.

In other words, AI infrastructure is not something that can be completed by one company on its own. It is moving toward optimization by use case, combining multiple specialized companies.

My view: is the CPU becoming the lead role of the inference era, not a side role?

In my view, the CPU is moving back into an important position within AI infrastructure.

That said, this does not mean the CPU will handle all of the computation as it did long ago.

The GPU will continue to be at the center of large-scale matrix math and model execution. HBM is the important memory that lets the GPU reach its full performance. The network is the foundation that connects distributed compute resources. The IPU and DPU offload infrastructure processing.

Within all of this, I think the CPU is becoming something like a “conductor” that controls the whole system, distributes tasks, manages data movement, and supports the runtime environment for AI agents.

In particular, with AI agents, work is not a single inference call. It is a multi-step process.

- Search.

- Write code.

- Call external tools.

- Verify the result.

- Re-run if needed.

- Return to the user.
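
The steps above can be sketched as a small control loop. This is a toy illustration, not how any particular agent framework works: `model_call`, `run_tool`, and `verify` are hypothetical stand-ins, and the verification is rigged to fail once so that the retry path is exercised.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRun:
    task: str
    log: list[str] = field(default_factory=list)


def model_call(prompt: str) -> str:
    """Hypothetical stand-in for an inference request sent to an accelerator."""
    return f"draft for: {prompt}"


def run_tool(name: str, payload: str) -> str:
    """Hypothetical stand-in for CPU-side work such as search or code execution."""
    return f"{name} ok"


def verify(result: str, attempt: int) -> bool:
    """Toy check: the first attempt is treated as failed to force one retry."""
    return attempt >= 2


def run_agent(task: str, max_attempts: int = 3) -> AgentRun:
    run = AgentRun(task)
    run.log.append("search")
    context = run_tool("search", task)
    for attempt in range(1, max_attempts + 1):
        run.log.append("inference")          # accelerator-side step
        draft = model_call(f"{task} | {context}")
        run.log.append("tool")               # CPU-side: call an external tool
        result = run_tool("execute", draft)
        run.log.append("verify")             # CPU-side: check the result
        if verify(result, attempt):
            break
        run.log.append("retry")              # CPU-side: decide to re-run
    run.log.append("return")
    return run


run = run_agent("fix the failing test")
print(run.log)
print("inference steps:", run.log.count("inference"))
print("orchestration steps:", len(run.log) - run.log.count("inference"))
```

Even in this toy version, a single user request turns into two inference calls surrounded by seven orchestration steps. The proportions are an artifact of the sketch, but they show why the work around the model grows as agents take on multi-step tasks.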

For this kind of workload, simply lining up many GPUs is not enough.

What matters is how efficiently you can run the whole system, including the CPU, memory, network, and storage.

In that sense, I see CPU price increases not just as a temporary supply-and-demand squeeze, but as a signal that the center of gravity in AI infrastructure is starting to shift.

What investors should watch

For an investor, this change is quite important.

Until now, AI infrastructure investment has tended to focus on Nvidia, HBM, advanced packaging, and data center power.

But once we are in the era of inference and AI agents, the field of view widens.

- Intel, AMD, and Arm, which have CPUs.

- Amazon, which has its own in-house cloud CPU.

- Marvell and Broadcom, which are strong in custom silicon.

- Companies like SiFive, which hold RISC-V CPU IP.

- The surrounding infrastructure including memory, storage, networking, and DPUs/IPUs.

All of these have the potential to grow in importance as AI agents are actually deployed in real-world applications.

From here, the question becomes who can build the system that runs inference cheaply, at scale, and stably.

I think investment themes will move toward that.

Another view

That said, we should not oversimplify the CPU’s reevaluation.

First, this does not mean the GPU is becoming less important. The GPU remains crucial in inference too, especially for large models and high-throughput inference, where GPUs and dedicated accelerators sit at the center.

Second, CPU price increases include short-term supply constraints. Intel’s factory capacity, supply and demand on older nodes, and inventory drawdowns are also temporary factors at play.

Third, if dedicated chips for inference become widespread, CPU demand for every inference workload will not necessarily rise in a straight line. Google’s TPU, AWS’s Trainium/Inferentia, Marvell and Broadcom’s custom ASICs, Nvidia’s new CPU/GPU integration strategy — the competition is complicated.

For these reasons, it is risky to read this as “the CPU alone becomes the next lead role.”

A more accurate view, I think, is that the value of AI infrastructure is widening from the GPU alone to the entire system, including CPU, GPU, memory, networking, and storage.

Wrap-up

The main battleground of AI infrastructure is moving from the question of how many training GPUs you can secure to the question of how efficiently, at what scale, and how stably you can run inference and AI agents.

In the middle of this change, the CPU is starting to take on an important meaning again.

In an era when AI agents carry out complex tasks, the CPU becomes the conductor that controls the whole system.

Intel’s data center CPU demand, Meta’s adoption of AWS Graviton, the Google–Intel CPU/IPU partnership, SiFive’s RISC-V funding round, and the Google–Marvell talks on inference chips — all of these show that the topic of AI infrastructure investment is widening from a GPU-only focus to optimization across the entire system.

The shift to the “inference era” does not mean the era of the GPU is ending.

Rather, I think it means that the era when AI infrastructure could be discussed in terms of GPUs alone is starting to end.
