Can AI Agents Actually Run Business Operations? — Automating Attendance Management with OpenClaw

Challenge

AI agents are being marketed as replacements for existing SaaS workflows. But can they really handle complex internal rules, multi-phase approval processes, and edge cases that took years of institutional knowledge to manage — all while keeping costs realistic and security under control?

Solution

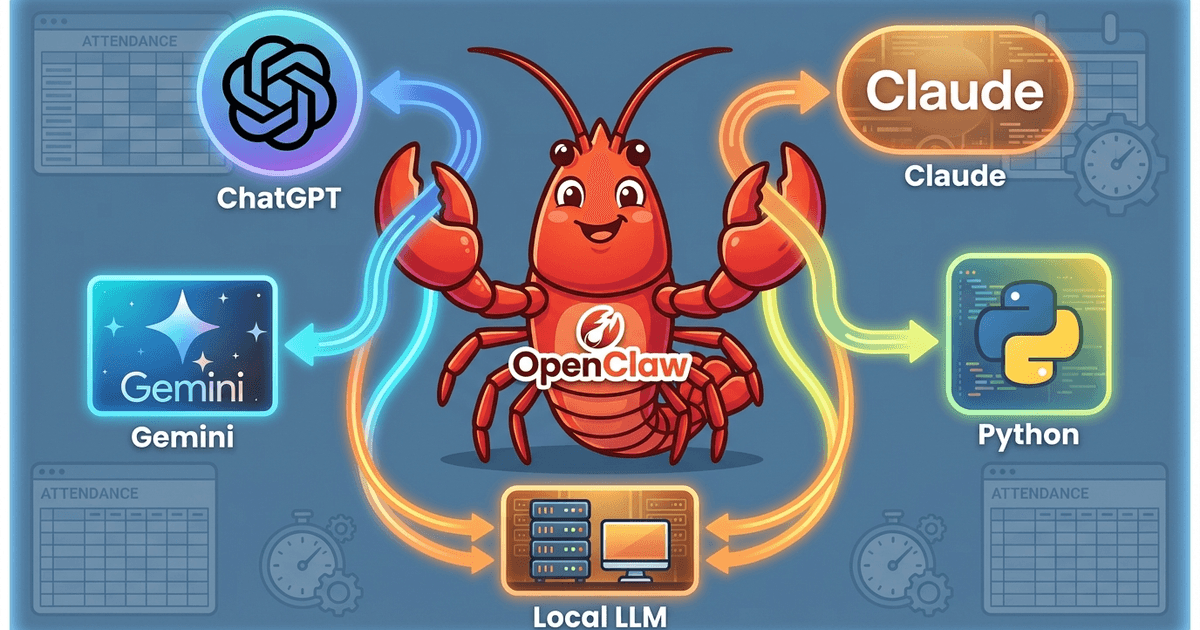

Built a multi-agent system on OpenClaw (5 agents, 3 models) to automate attendance rule checking. Designed a three-layer architecture: Python for execution, LLMs for judgment and adaptation, and the agent platform for orchestration. Deployed on a cloud server for 24/7 operation.

Result

Achieved 100% benchmark accuracy (729/729 records) at $0 per pipeline run, not counting the API costs of agent operations. Confirmed that the agents can autonomously handle clearly specified changes, including an HTML structure change and rule updates.

About This Project

The Problem

Since late 2025, AI agent platforms have been appearing everywhere. OpenClaw, Claude Cowork, Microsoft Copilot Cowork, OpenAI Frontier — one after another, companies are shipping platforms that promise to let AI handle business tasks. The term “SaaS Apocalypse” has entered the conversation, with the claim that AI agents will replace the workflows that traditional SaaS products have been handling.

But how much of this holds up in practice?

- Can AI agents truly understand company-specific rules and workflows?

- Can they complete tasks reliably when the context is complex?

- Are API costs realistic for production use?

- How do you manage security concerns?

- Can they run 24/7 on cloud servers?

Few reports test these questions in a production-like environment; most stop at simple demos and proof-of-concept experiments.

What I Did

I worked on this project as an FDE (Forward Deployed Engineer) — embedded at the client site, understanding their business processes deeply, then building AI solutions to automate them. The contract is performance-based: payment is tied to achieving specific KPIs. This model is well known from Palantir’s approach to client engagements.

The client had a major pain point in their attendance management process.

First, the rules are complex. Detailed labor regulations, department-specific rules, position-based exceptions, years of accumulated tribal knowledge — all intertwined. “This rule only applies to Department A.” “Skip for section chiefs and above.” “If on-call duty and night shift fall on the same day, use a different judgment.” There are countless conditional branches like these.

Second, the workflow has multiple phases. Attendance checking goes through first approval, second approval, and final approval, each handled by different HR staff at different levels. The first phase handles general checks (missing clock-ins, work hour consistency), and later phases handle more critical checks (overtime limit violations, legally required break times). Each phase applies different rules, and each phase depends on the results of the previous one.

Currently, about 10 HR staff spend 10 days every month doing this entire process manually for each employee. When issues are found, they send individual emails requesting corrections, wait for responses, and re-check — all concentrated at the end of each month.

The mission of this project was to compress 100 person-days of work (10 staff × 10 days) into 10 hours. The approval steps progress daily, so everything cannot be finished in a single day. But if each phase’s execution time drops below 1 hour, the full 10-day approval cycle should need only about 10 hours of processing in total. That is a reduction of roughly two orders of magnitude: at 8 hours per person-day, 800 hours become 10, or about 80x.

Simple rule checking can be done in Python. But applying different rules per phase, branching later steps based on earlier results, and switching exception conditions by department and position add up to a workflow that is hard to orchestrate and maintain with Python scripts alone. A single LLM also struggles to control multi-phase business flows reliably. This is where an AI agent platform like OpenClaw becomes necessary.
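To make "simple rule checking in Python" concrete, here is a minimal sketch of a single check with the kind of department and position exceptions described above. It is not the project's actual code: the `Employee` and `DayRecord` types, the rank scale, and `SECTION_CHIEF_RANK` are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Employee:
    department: str
    rank: int  # hypothetical scale: a higher number means a more senior position


@dataclass(frozen=True)
class DayRecord:
    clock_in: Optional[str]   # "HH:MM", or None when the entry is missing
    clock_out: Optional[str]


SECTION_CHIEF_RANK = 3  # hypothetical threshold for "section chief and above"


def rule_applies(emp: Employee, departments: set[str], skip_from_rank: int) -> bool:
    """Scope test: the rule covers the listed departments, below the given rank."""
    return emp.department in departments and emp.rank < skip_from_rank


def check_missing_clock(emp: Employee, rec: DayRecord) -> list[str]:
    """Flag missing clock-in/out, but only for Department A below section chief."""
    if not rule_applies(emp, {"A"}, SECTION_CHIEF_RANK):
        return []
    issues = []
    if rec.clock_in is None:
        issues.append("missing clock-in")
    if rec.clock_out is None:
        issues.append("missing clock-out")
    return issues
```

One check like this is easy. The difficulty comes from dozens of them, each with its own scope, applied differently in each approval phase.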

This series focuses primarily on automating the rule checking itself. Workflow control (managing approval phases and switching rule sets between phases) will be covered in future articles.

I already had an attendance checking system built with Python and Playwright, which gave me a solid foundation to test whether OpenClaw’s AI agents could automate the process.

Why Run It on a Cloud Server?

In the OpenClaw community, the common approach seems to be running agents on a dedicated local machine with throwaway accounts — so if the agent goes out of control, the damage stays contained. That makes sense. Running on a cloud server is less common.

I chose a cloud server (Hetzner Cloud) for practical reasons:

- Dedicated hardware was hard to procure for a client project — purchasing and installing physical machines required approval processes that did not match the pace of experimentation

- A fully isolated environment was required for security — the system handles data that includes personal information, so it needed to run in a sandboxed environment, separate from the internal network, processing only anonymized data

- 24/7 uptime — local machines sleep or lose power, but a cloud server stays running

Running on a cloud server has risks too: the agent can access the internet, server management costs money, and you have to handle security configuration yourself. I will cover these in detail in the individual articles.

What Was Built

Using OpenClaw, I built the following in stages:

- AI-driven rule coding — Feed attendance rules to AI and have it autonomously generate Python validation code

- Multi-agent architecture — Split responsibilities across 5 AI agents to prevent cost explosions and context bloat

- Playwright pipeline — Automate browser operations and run comparisons against the existing system at $0 per execution

- Autonomous adaptation testing — Test how well AI agents can autonomously handle HTML structure changes and rule additions

This is a client project, so specific company names and detailed business rules are not disclosed. The client has approved publication within the scope where no company or individual can be identified. Technical architecture, configuration, costs, and failure stories are shared as openly as possible.

Results

| Metric | Value |
|---|---|
| Benchmark accuracy | 100% (729/729 records) |
| Pipeline execution cost | $0/run (no LLM needed; Playwright + Python only) |
| Autonomous adaptation | Clear spec changes are handled autonomously |
| HTML structure change | Coder agent autonomously refactored to dynamic column detection |
| Monthly operating cost | $0 for heartbeat and scheduled tasks (local LLM and scripts) |

Note: The $0 figures above refer to the pipeline execution (Python/Playwright) and scheduled tasks (local LLM). Agent operations incur separate API costs — the main agent runs on Gemini Flash, the coder agent runs on Claude Sonnet/Haiku, and the analyze agent runs on ChatGPT-5.4. See the “Agent Architecture” section below for cost tiers.
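The benchmark accuracy figure comes from comparing the new checker's output with the existing system's output, record by record. A minimal sketch of such a comparison follows. It assumes each system produces one verdict string per record id; the `benchmark` function and the sample verdicts are hypothetical, not the project's data.

```python
def benchmark(expected: dict[str, str], actual: dict[str, str]) -> tuple[int, int, list[str]]:
    """Compare per-record verdicts; return (matches, total, mismatched record ids).

    A record that is missing from `actual` counts as a mismatch.
    """
    mismatches = [rid for rid, verdict in expected.items() if actual.get(rid) != verdict]
    total = len(expected)
    return total - len(mismatches), total, sorted(mismatches)


# Hypothetical sample: verdicts from the existing system vs. the generated checker.
legacy = {"r1": "OK", "r2": "NG:missing clock-in", "r3": "OK"}
generated = {"r1": "OK", "r2": "NG:missing clock-in", "r3": "NG:overtime"}

matches, total, diff = benchmark(legacy, generated)
print(f"{matches}/{total} matched, mismatches: {diff}")
```

Returning the mismatched ids, not just a percentage, matters in practice: the list is the starting point for debugging each disagreement.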

Why Not “Do Everything with AI”?

Looking at the results — “$0 pipeline cost,” “Python rule checking” — you might wonder how this differs from a traditional rule-based system. And yes, the execution itself is just Python scripts. There is no need to have an LLM judge rules every time.

But running business operations with AI agents is not only about execution.

The Limits of Python Rule-Based Systems

Python rule-based systems are excellent at repeating fixed rules accurately. But in real business environments, problems like these arise:

- When labor regulations are revised, who updates the code?

- When the HTML structure changes, who notices the parser is broken?

- When a new work type is added, who handles everything from updating master data to running tests?

Rule-based systems assume that the person who wrote them keeps maintaining them. When that person transfers to another department or leaves the company, the system gradually falls out of sync with reality.

The Limits of LLMs Alone

So should you just hand everything to an LLM? Through experimentation, I found this is not realistic either.

- There are dozens of attendance rules. Passing them all to the prompt every time bloats the context and causes costs to explode

- LLM judgments are probabilistic — there is no guarantee the same input produces the same output every time

- For tasks that require reproducibility and precision — numerical comparisons, time calculations — code is far more reliable
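As an illustration of the last point, a time calculation in code gives the same answer on every run. The sketch below computes worked minutes from clock-in and clock-out times, including a shift that crosses midnight. The function names and the 480-minute limit in the usage note are assumptions for illustration, not the client's rules.

```python
from datetime import datetime, timedelta


def worked_minutes(clock_in: str, clock_out: str, break_minutes: int = 0) -> int:
    """Minutes worked between two "HH:MM" times, minus breaks.

    A clock-out that is not later than the clock-in is treated as next-day.
    """
    start = datetime.strptime(clock_in, "%H:%M")
    end = datetime.strptime(clock_out, "%H:%M")
    if end <= start:  # the shift crosses midnight
        end += timedelta(days=1)
    return int((end - start).total_seconds() // 60) - break_minutes


def exceeds_limit(minutes: int, limit_minutes: int) -> bool:
    """True when the worked time is strictly over the limit."""
    return minutes > limit_minutes
```

For example, `worked_minutes("22:00", "06:00", 60)` returns 420, and `exceeds_limit(420, 480)` is `False`, no matter how many times it runs.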

In this project, when I had Gemini Flash generate the validation code, it failed repeatedly. A single task consumed over $8 in API costs without producing usable code. If this ran across all departments every month, API costs would pile up with no improvement in accuracy.

What an AI Agent Platform (OpenClaw) Solves

What OpenClaw provides is a system that combines Python’s precision with LLM flexibility, and governs the whole thing as a business process.

Python handles execution. LLMs handle judgment and adaptation. The agent platform handles orchestration. This three-layer structure is the core of a business AI agent system.

The platform provides these building blocks:

| Component | Role | Why It Matters |
|---|---|---|
| SOUL.md | Agent personality and behavioral rules | Explicitly states what the agent must not do, preventing runaway behavior |
| AGENTS.md | Routing table and delegation rules | Fixes which tasks go to which agent/model via config files |
| MEMORY | Daily notes and long-term memory | Preserves context across sessions. Captures tribal knowledge in files |
| Skill (SKILL.md) | Business skill definitions and procedures | Gives the AI a clear understanding of what to modify and how |
| Session isolation | Independent sub-agent execution | Keeps heavy processing contexts out of the main agent. Prevents cost explosions |
| Model routing | Per-task model selection | Sonnet for code generation, Flash for simple tasks, local LLM for scheduled jobs |

For example, when labor regulations are revised: with a Python-only system, a developer has to modify the code. An LLM alone cannot be relied on to retain the rules accurately from one session to the next. With OpenClaw, a user can simply say “this rule only applies to Department C, skip for section chiefs and above,” and the main agent routes it to the coder agent, which follows the definition-first principle in SKILL.md to update the TSV definitions, modify the code, and run the tests. Knowledge stays in files, execution stays in code, and judgment and adaptation stay with the LLM.
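The definition-first idea can be sketched as follows: rule scope lives in a TSV file, and the Python code only interprets it. The real TSV layout is not disclosed, so the column names (`rule_id`, `departments`, `skip_min_rank`), the `*` wildcard, and the rank scale below are assumptions made for illustration.

```python
import csv
import io

# Hypothetical rule definitions. R001 applies only to Department C and is
# skipped from rank 3 upward; R002 applies to everyone.
RULES_TSV = (
    "rule_id\tdepartments\tskip_min_rank\n"
    "R001\tC\t3\n"
    "R002\t*\t\n"
)


def load_rules(tsv_text: str) -> list[dict]:
    """Parse the TSV into rule dicts with a department set and an optional rank cutoff."""
    rules = []
    for row in csv.DictReader(io.StringIO(tsv_text), delimiter="\t"):
        departments = None if row["departments"] == "*" else set(row["departments"].split(","))
        cutoff = int(row["skip_min_rank"]) if row["skip_min_rank"] else None
        rules.append({"rule_id": row["rule_id"], "departments": departments, "skip_min_rank": cutoff})
    return rules


def applicable_rules(rules: list[dict], department: str, rank: int) -> list[str]:
    """Return the ids of the rules that apply to an employee."""
    result = []
    for rule in rules:
        if rule["departments"] is not None and department not in rule["departments"]:
            continue  # rule is scoped to other departments
        if rule["skip_min_rank"] is not None and rank >= rule["skip_min_rank"]:
            continue  # employee is senior enough to be exempt
        result.append(rule["rule_id"])
    return result
```

With this split, an instruction such as "this rule only applies to Department C, skip for section chiefs and above" becomes a one-line change to the definition file, and the checking code stays untouched.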

The same applies when HTML structure changes. In this project, two columns were actually added to the attendance page. I told the coder agent to “switch to detecting column positions by header name instead of fixed indices,” and it autonomously completed the entire process — from adding the design principle to SKILL.md, to refactoring the code, to running the tests.
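The refactoring described here, detecting columns by header name instead of by fixed index, can be sketched in a few lines. This is an illustration of the technique, not the coder agent's actual output, and the header names are hypothetical.

```python
def column_index(headers: list[str]) -> dict[str, int]:
    """Map each header name to its position in the row."""
    return {name.strip(): i for i, name in enumerate(headers)}


def extract(row: list[str], index: dict[str, int], wanted: list[str]) -> dict[str, str]:
    """Pick cells by header name; fail loudly if an expected column is missing."""
    missing = [name for name in wanted if name not in index]
    if missing:
        raise KeyError(f"missing columns: {missing}")
    return {name: row[index[name]].strip() for name in wanted}


WANTED = ["Date", "Clock-in", "Clock-out"]  # hypothetical header names

# The same record before and after two columns were added to the page.
old_headers = ["Date", "Clock-in", "Clock-out"]
old_row = ["2026-03-02", "09:00", "18:00"]
new_headers = ["Date", "Work type", "Clock-in", "Remarks", "Clock-out"]
new_row = ["2026-03-02", "Regular", "09:00", "", "18:00"]

before = extract(old_row, column_index(old_headers), WANTED)
after = extract(new_row, column_index(new_headers), WANTED)
```

Both layouts yield the same dictionary, so added or reordered columns no longer break the parser. A renamed or removed column raises an error instead of silently reading the wrong cell.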

The Three-Layer Structure

| Layer | Handles | Good At | Not Good At |
|---|---|---|---|
| Execution (Python) | Rule checking, numerical comparison, time calculation | Precision, reproducibility, $0 cost | Adapting to change, handling ambiguity |
| Judgment (LLM) | Code generation, rule interpretation, structural changes | Flexibility, natural language understanding | Reproducibility, cost control |
| Orchestration (OpenClaw) | Routing, memory, session management, runaway prevention | End-to-end workflow consistency | N/A (it is infrastructure, not a decision-maker) |

The idea is not to “do everything with AI.” Instead, each layer handles only what it is best at. I believe this design is what makes business AI agents practical.

Why I Am Sharing This

Automating business operations with AI agents is technically possible. But there is a wide gap between “possible” and “practical.”

What I learned through this project is that AI agents have clear strengths and equally clear limits. They are good at executing well-defined specification changes. But investigative tasks (“something is wrong but I do not know what”) are still a human job. Throwing everything at an AI agent without structure does not produce the results you expect. Without cost management, API charges can reach several dollars overnight. A misconfigured agent can start acting on its own in ways nobody asked for.

I am sharing these experiences — with actual config files, cost records, and failure stories, not abstract arguments — in the hope that it helps others who are considering AI agents for their own business operations.

Tech Stack

| Layer | Technology |
|---|---|
| AI Agent Platform | OpenClaw (v2026.3.24) |
| High-precision model (code generation, analysis) | Claude Sonnet 4.6 |
| Efficient model (orchestration, web tasks) | Gemini 3 Flash |
| Local model (scheduled tasks, heartbeat) | Gemma3 4B (Ollama) |
| Browser automation | Playwright (Python) |
| Server | Hetzner Cloud (4vCPU / 16GB) |
| Debugging and verification | Claude Code (Opus) |

Agent Architecture

Instead of having one AI agent handle everything, I split responsibilities across 5 agents.

| Agent | Model | Role | Cost |
|---|---|---|---|
| main | Gemini 3 Flash | Orchestration, task routing | Low |
| heartbeat | Gemma3 4B (local) | Health check response | $0 |
| monitor | Gemini 3 Flash | Web summaries, periodic checks | Low |
| coder | Claude Sonnet / Claude Haiku | Code generation, skill updates / source verification | Medium |
| analyze | ChatGPT-5.4 | Deep analysis, comparison reports | Medium |
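The routing idea behind this table can be expressed as a fixed lookup rather than a decision the LLM makes each time. The sketch below is plain Python for illustration only. It is not OpenClaw's AGENTS.md format, and the task type names and the fallback to `main` are assumptions. The agent and model names are taken from the table above, with the coder agent's primary model shown.

```python
# Agent-to-model assignments, copied from the architecture table.
AGENT_MODELS = {
    "main": "Gemini 3 Flash",
    "heartbeat": "Gemma3 4B (local)",
    "monitor": "Gemini 3 Flash",
    "coder": "Claude Sonnet",
    "analyze": "ChatGPT-5.4",
}

# Hypothetical task types mapped to the agent that should handle them.
ROUTES = {
    "code_generation": "coder",
    "skill_update": "coder",
    "comparison_report": "analyze",
    "web_summary": "monitor",
    "health_check": "heartbeat",
}


def route(task_type: str) -> str:
    """Return the agent for a task type; unknown types stay with the main agent."""
    return ROUTES.get(task_type, "main")


def model_for(task_type: str) -> str:
    """Return the model that will end up serving a task type."""
    return AGENT_MODELS[route(task_type)]
```

Because the mapping is explicit, a health check can never land on a paid model by accident, which is the point of fixing routing in configuration.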

Getting to this architecture involved failures — cost explosions, runaway agents, and more. Those details are covered in the individual articles.

What I Learned

Where AI Agents Are Strong

- They can autonomously execute clear spec changes — A single instruction like “this rule only applies to Department C, skip for section chiefs and above” triggers the full cycle: updating definition files, modifying code, and running tests

- They can automate browser interactions — Login, form submission, and result retrieval can be handled autonomously by the LLM (though once stabilized, these can be locked down to Python scripts at $0)

- Multi-model setups keep costs under control — Instead of running every task on an expensive model, you route tasks to different models based on their nature

Where AI Agents Fall Short

- Investigative tasks are still difficult — Finding the root cause of a benchmark mismatch is an exploratory task where a human with a high-precision model is faster and more accurate

- They cannot distinguish visually identical characters — Unicode traps (○ vs 〇) are especially common in AI-generated code. Cross-checking against real data is essential

- Cost management requires active attention — Left unchecked, a few dollars can vanish in a single day. Local LLMs and session isolation help
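The Unicode trap mentioned above is easy to make visible. The two circles look alike but are different code points, so a string comparison between them fails. A minimal sketch using only the standard library follows; the helper names and the idea of comparing code literals against observed values are illustrative assumptions, not the project's tooling.

```python
import unicodedata


def describe(text: str) -> list[str]:
    """List the code point and Unicode name of every non-ASCII character."""
    return [
        f"U+{ord(ch):04X} {unicodedata.name(ch, 'UNKNOWN')}"
        for ch in text
        if ord(ch) > 127
    ]


def unexpected_symbols(code_literals: set[str], observed_values: set[str]) -> set[str]:
    """Return literals used in the code that never occur in the real data."""
    return code_literals - observed_values
```

Here `describe("○")` reports `U+25CB WHITE CIRCLE`, while `describe("〇")` reports `U+3007 IDEOGRAPHIC NUMBER ZERO`. Running `unexpected_symbols` with the symbols hardcoded in generated code and the values actually scraped from the page flags a literal that can never match.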

Operational Lessons

- Definition-first principle — For AI to autonomously modify things, knowledge must live in definition files (TSV/YAML/SKILL.md), not hardcoded in source code

- Put everything in config files — Relying on “just ask the agent nicely” is fragile. Routing, model selection, and session settings should all be explicit in configuration

- Set up runaway protection on day one — Heartbeat settings, limits on proactive behavior, and session isolation should be configured before you start the agent

Series Articles

The full details of this project are documented in an 8-part blog series, published progressively.

| Part | Topic |
|---|---|
| Part 1 | Setting Up OpenClaw on a Cloud Server — Why Costs Hit $10 a Day |
| Part 2 | OpenClaw Code Generation — Why the AI Hardcoded the Expected Answers |
| Part 3 | OpenClaw Heartbeat Gone Wrong — Lessons From an Agent Runaway at 3 AM |
| Part 4 | 5-Agent Architecture — Designing Against Context Explosion (upcoming) |
| Part 5 | From 93% to 100% — The Full Debugging Record of AI-Generated Code (upcoming) |
| Part 6 | Definition-First — Design Principles That Let AI Self-Modify (upcoming) |
| Part 7 | Playwright Pipeline — Automation at $0 Execution Cost (upcoming) |
| Part 8 | When the HTML Changes, Can AI Adapt Autonomously? (upcoming) |

Who This Is For

- Engineers considering AI agents for business operations

- Anyone interested in OpenClaw or similar AI agent platforms

- People who want to know if AI can realistically replace SaaS workflows

- Those looking for real examples of multi-model architectures and cost optimization

Disclaimer

This project was conducted as part of a client engagement. Specific company names, detailed business rules, and authentication credentials are not disclosed. The focus is on technical architecture, configuration, costs, and failure stories.

Attendance management is a relatively simple workflow. Applying AI agents to more complex business processes would require additional consideration. I hope this serves as a practical reference for those at the early stages of introducing AI agents into their operations.

I am still relatively new to OpenClaw, so there may be settings, approaches, or best practices that I have overlooked. If you have suggestions or corrections, I would be grateful to hear them — please leave a comment or reach out on LinkedIn.
