META FIT GenAI — When Generative AI Replaced the Entire GAN Pipeline

Challenge

Google had already shipped AI-powered virtual try-on in Google Shopping. Could the same generative AI technology replace the entire PASTA-GAN++ pipeline — solving the body diversity, processing speed, and infrastructure problems that GANs could not? The existing system also carried non-commercial licenses that blocked any path to production.

Solution

Replaced the entire GAN pipeline with Google's generative AI APIs. Built a dual-engine architecture using Gemini (Nano Banana) for person-to-person transfer and Vertex AI Virtual Try-On for product-to-person fitting. Validated across 28 test cases covering diverse body types, action poses, and complex garment patterns.

Result

Achieved dramatically superior results in body diversity, garment fidelity, and face quality — all with a single API key and zero GPU infrastructure. Confirmed that generative AI resolves the core limitations that made GAN-based virtual try-on impractical for production use.

From Part 5 to a New Chapter

In the original META FIT series, I documented a 20-year journey building a virtual try-on system — from photo booth concepts to GAN-powered image generation. Part 5 ended with honest conclusions: the system worked for standard body types, but body diversity, processing speed, and garment fidelity remained unsolved.

That was the GAN era. This is what happened when generative AI arrived.

The Turning Point: Generative AI Arrives

The migration started with a realization: generative AI had already made virtual try-on a production reality. Google Shopping’s AI try-on feature was generating realistic fitting images for diverse body types — the exact problem my GAN pipeline struggled with. Could the same technology replace PASTA-GAN++ entirely?

A subsequent license audit strengthened the case. A thorough review of the PASTA-GAN++ codebase revealed that five core components carried non-commercial licenses:

| Component | License | Role |
|---|---|---|
| PASTA-GAN++ | Non-commercial research | Try-on engine |
| StyleGAN2 (NVIDIA) | NVIDIA Source Code NC | Generator backbone |
| OpenPose (CMU) | Academic non-commercial | Pose detection |
| PF-AFN | Non-commercial research | Warping module |
| FlowNet2 | Research-only | Flow estimation |

The system could never become a commercial product without replacing every one of these components. This constraint became the catalyst for a complete rethink.

The New Architecture: API Instead of Pipeline

The old system required a multi-stage pipeline: OpenPose for pose detection, Graphonomy for body segmentation, PASTA-GAN++ for image generation — all running inside a Docker container with NVIDIA GPU access.

The new system replaced all of it:

Old: Photo → OpenPose → Graphonomy → PASTA-GAN++ (GPU) → Result

New: Photo + Clothing + Prompt → Gemini API → Result

Two distinct engines serve different use cases:

| Use Case | Engine | Input |
|---|---|---|
| Product try-on (EC sites) | Vertex AI Virtual Try-On | Person photo + product image |
| Person-to-person transfer | Gemini (Nano Banana) | Target person + source person |

Both are commercially licensed. Gemini needs only an API key, Vertex AI Virtual Try-On needs a GCP project, and neither requires GPU infrastructure.
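The split between the two engines can be sketched as a small routing function. This is an illustrative sketch rather than the project's actual code: the `Engine` enum, the `TryOnRequest` dataclass, and the `garment_source` field are hypothetical names introduced here.

```python
from dataclasses import dataclass
from enum import Enum


class Engine(Enum):
    VERTEX_VTO = "Vertex AI Virtual Try-On"
    GEMINI = "Gemini (Nano Banana)"


@dataclass(frozen=True)
class TryOnRequest:
    person_image: str    # path to the photo of the person to dress
    garment_image: str   # path to the product shot or the source-person photo
    garment_source: str  # "product" or "person" (hypothetical field)


def select_engine(request: TryOnRequest) -> Engine:
    """Pick an engine according to the use-case table."""
    if request.garment_source == "product":
        return Engine.VERTEX_VTO   # flat product image applied to a person
    if request.garment_source == "person":
        return Engine.GEMINI       # outfit taken from another person's photo
    raise ValueError(f"unknown garment_source: {request.garment_source!r}")
```

Keeping the decision in one function means the rest of an application would not need to know which API ends up handling a given request.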

Results: The Body Diversity Breakthrough

The most significant improvement is in body diversity — the problem that defined the limitations of the GAN approach.

PASTA-GAN++ was trained primarily on slim models. When presented with other body types, garments collapsed into visual noise and the system consistently “slimmed” subjects toward the training distribution.

In these tests, the generative models showed no comparable slimming bias. They infer body shape from the input image and adapt garments accordingly:

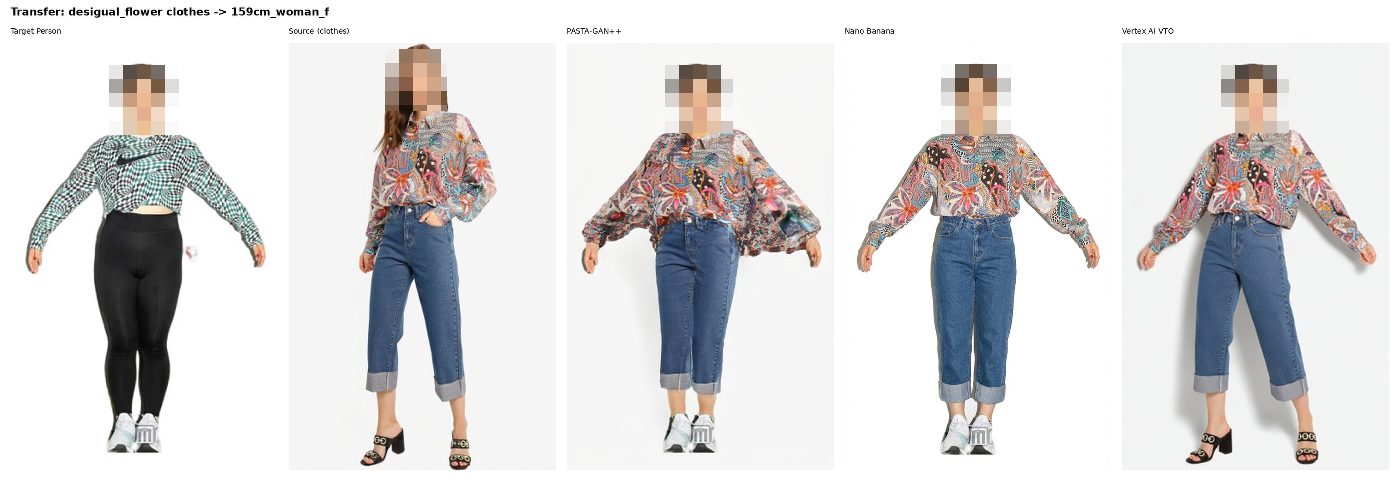

Left to right: input images, PASTA-GAN++ result (garment collapsed, body slimmed), Nano Banana result (faithful body shape, clean garment), Vertex VTO result.

The difference is not incremental — it is generational.

What Each Engine Does Best

Through 28 test cases across three phases, clear patterns emerged:

Nano Banana (Gemini) excels at:

- Person-to-person transfer (extracting clothing from one person and applying it to another)

- Body type preservation (faithfully maintaining the subject’s actual proportions)

- Action poses (dancing, punching, stretching — even with extreme motion)

- Cross-gender dressing (adapting garments across body types naturally)

Vertex AI VTO excels at:

- Product-to-person fitting (flat product images applied to a person photo)

- Color and design fidelity (reproducing exact shades and garment structure)

- Shoes and accessories (item-level precision)

Both struggle with:

- Safety filters blocking legitimate fashion content (exposed shoulders, sportswear)

- Extremity pose changes (hands and feet sometimes shift position)
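Because a safety-filter block is an expected outcome rather than a crash, a test harness can record it as its own category. The following is a minimal sketch, assuming each engine is wrapped in a callable that raises a hypothetical `SafetyBlocked` exception when a request is filtered. Neither `SafetyBlocked` nor `run_cases` comes from the Gemini or Vertex AI SDKs, and the stub engine uses made-up case names.

```python
from collections import Counter
from typing import Callable, Iterable


class SafetyBlocked(Exception):
    """Hypothetical error a wrapper raises when the API filters a request."""


def run_cases(engine: Callable[[str], bytes], cases: Iterable[str]) -> Counter:
    """Run every case through one engine and tally the outcomes."""
    tally: Counter = Counter()
    for case in cases:
        try:
            engine(case)
        except SafetyBlocked:
            tally["blocked"] += 1   # filtered by the provider, not a bug
        except Exception:
            tally["error"] += 1     # anything else counts as a real failure
        else:
            tally["ok"] += 1
    return tally


def stub_engine(case: str) -> bytes:
    """Stand-in for a real engine call; blocks one made-up case."""
    if case == "off-shoulder dress":
        raise SafetyBlocked(case)
    return b"fake-image-bytes"


tally = run_cases(stub_engine, ["t-shirt", "off-shoulder dress", "sneakers"])
print(dict(tally))  # {'ok': 2, 'blocked': 1}
```

Separating "blocked" from "error" keeps filter behavior visible in the results instead of hiding it among genuine failures.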

Technical Stack

| Layer | Technology |
|---|---|
| Try-on Engine (Transfer) | Gemini 3 Pro Image Preview (Nano Banana) |
| Try-on Engine (Product) | Vertex AI Virtual Try-On (virtual-try-on-001) |
| Authentication | Gemini API Key + GCP Service Account |
| Image Processing | Pillow, OpenCV |
| Pose Analysis (optional) | MediaPipe Pose + Face Mesh |
| Language | Python 3 |
| Source Code | github.com/matu79go/metafit |
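A person-to-person transfer call on this stack might look like the sketch below. It assumes the `google-genai` Python SDK (`pip install google-genai`), a `GEMINI_API_KEY` environment variable, and JPEG inputs. The model ID string, the prompt wording, and the function names are assumptions for illustration and are not taken from the project's repository.

```python
import os
from pathlib import Path

# Assumed ID for "Gemini 3 Pro Image Preview"; verify against current docs.
MODEL_ID = "gemini-3-pro-image-preview"


def build_transfer_prompt() -> str:
    """Prompt for person-to-person transfer (illustrative wording)."""
    return (
        "The first image is the target person and the second image is the "
        "source person. Dress the target person in the source person's outfit. "
        "Keep the target person's face, body shape, and pose unchanged."
    )


def transfer_outfit(target_path: str, source_path: str, out_path: str) -> bool:
    """Send both photos to Gemini and save the first returned image, if any."""
    # Imported lazily so the prompt helper works without the SDK installed.
    from google import genai
    from google.genai import types

    client = genai.Client(api_key=os.environ["GEMINI_API_KEY"])
    images = [
        types.Part.from_bytes(data=Path(p).read_bytes(), mime_type="image/jpeg")
        for p in (target_path, source_path)  # order matches the prompt
    ]
    response = client.models.generate_content(
        model=MODEL_ID,
        contents=[build_transfer_prompt(), *images],
    )
    candidates = response.candidates or []
    if not candidates or candidates[0].content is None:
        return False  # nothing usable came back, e.g. the request was blocked
    for part in candidates[0].content.parts or []:
        if part.inline_data is not None:
            Path(out_path).write_bytes(part.inline_data.data)
            return True
    return False  # the response contained text only
```

A call such as `transfer_outfit("target.jpg", "source.jpg", "result.png")` returns `False` when no image comes back, which is one place a safety-filter block may surface.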

Development Process in Detail

The full technical deep-dive — covering the migration rationale, Gemini API implementation, prompt engineering, systematic testing across 28 cases, and the three-engine comparative analysis — is documented in a 3-part blog series:

| Part | Topic |
|---|---|
| Part 1 | From GANs to Generative AI — Why and How the Migration Happened |
| Part 2 | Nano Banana Virtual Try-On — 16 Test Cases and What They Revealed |
| Part 3 | The 3-Engine Showdown — PASTA-GAN++ vs Nano Banana vs Vertex AI VTO |

Connection to the Original Series

This project builds directly on the work documented in META FIT (GAN era) and its 5-part blog series. The original series covers GAN theory, the PASTA-GAN++ implementation, pose estimation, and body measurement — the foundation that made this generative AI migration possible.
