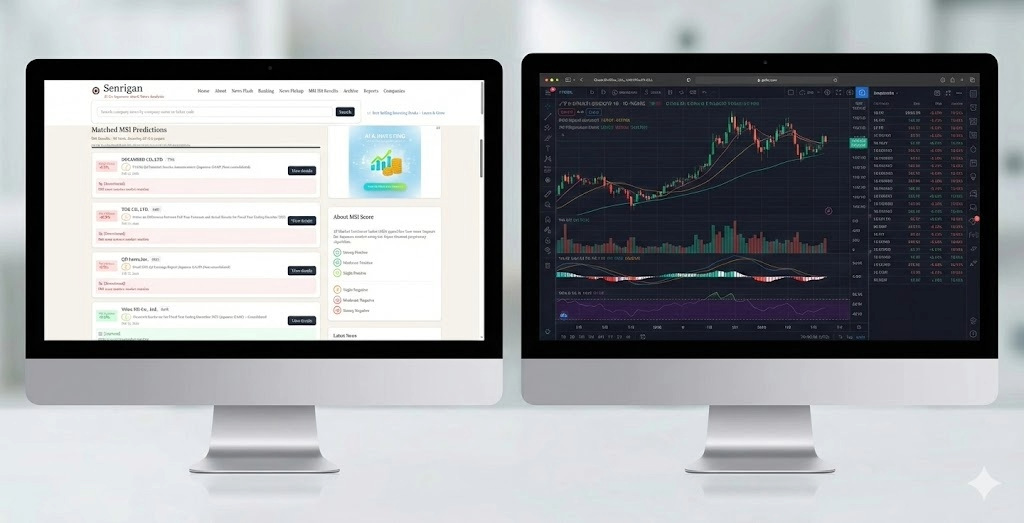

Senrigan — AI That Reads Stock News and Predicts Tomorrow's Price Movement

Challenge

Hundreds of corporate disclosures and news articles are published every trading day. No individual investor can read them all and make informed decisions in time.

Solution

Built a web service that feeds 5 types of corporate data and news into a fine-tuned LLM to predict next-day stock price movements automatically.

Result

The system ran daily predictions, publishing results in both Japanese and English. The service has since been taken offline.

Why I Built This

In 2023, an experiment by UK financial comparison site Finder made headlines. A portfolio of 38 stocks selected by ChatGPT returned +4.9% over 63 days, while the UK’s 10 most popular funds (HSBC, Fidelity, etc.) averaged -0.8% over the same period. Over two years, the gap widened to +41.97% vs +27.63%, a difference of about 14 percentage points.

Reference: ChatGPT can pick stocks better than your fund manager (CNN Business)

This was a limited experiment, and Finder themselves cautioned against using ChatGPT for actual investment decisions. But it demonstrated something significant: LLMs can “read” corporate news and extract investment-relevant insights.

“Could the same approach work for Japanese stocks?”

That question was the starting point for Senrigan.

The Problem Senrigan Solves

Information Overload for Individual Investors

In the Japanese market, hundreds of timely disclosure filings (via TDnet — Japan’s official corporate disclosure system) and news articles are published every day. Earnings reports, forecast revisions, dividend announcements — each can move stock prices, but processing all of them manually takes an enormous amount of time.

Limitations of Traditional Approaches

Traditional stock prediction often relies on numerical time-series models such as ARIMA and LSTM. Used this way, they have a fundamental limitation: they take price and volume series as input and cannot read natural language.

When a company announces “operating profit revised upward by 51%,” understanding its impact on the stock price requires more than just numbers. You need to grasp the context of the news, industry conditions, and market expectations.

Senrigan’s Approach

Senrigan tackles this challenge by fine-tuning an LLM to combine numerical corporate data with natural language news, predicting next-day stock price movements.

How It Works

Input Data (5 Types Combined)

For each news item, Senrigan combines five types of data to make predictions.

| Data Type | Contents | Example |
|---|---|---|
| Company Info | Industry, market cap, business overview | Chemicals, JPY 28.9B, major adhesive manufacturer |
| News | Timely disclosures, earnings reports | “Operating profit revised upward by 51%, new record high” |
| Stock Prices | OHLCV for the last 5 trading days | Open, High, Low, Close, Volume |
| Financials | 2 years of revenue, margins, ROE | Revenue JPY 41.3B, margin 9.26%, ROE 8.38% |
| Macro Indicators | CPI, GDP growth, policy rate, exchange rate | CPI 107.95, policy rate 0.75%, USD/JPY 151.37 |
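As a rough illustration of how the five data types could be merged into one model input, here is a minimal sketch. The function name, the key names, and the OHLCV numbers are assumptions made for this example; only the company, news, financial, and macro values come from the table above.

```python
import json


def build_model_input(company, news, prices, financials, macro):
    """Combine the five data types into one JSON string (hypothetical schema)."""
    if len(prices) != 5:
        raise ValueError("expected OHLCV rows for the last 5 trading days")
    payload = {
        "company": company,
        "news": news,
        "prices": prices,
        "financials": financials,
        "macro": macro,
    }
    # ensure_ascii=False keeps Japanese text readable instead of \u escapes.
    return json.dumps(payload, ensure_ascii=False)


# Placeholder OHLCV row, repeated five times purely for illustration.
placeholder_day = {"open": 1000, "high": 1010, "low": 990, "close": 1005, "volume": 120000}

model_input = build_model_input(
    company={"industry": "Chemicals", "market_cap_jpy": 28.9e9,
             "overview": "major adhesive manufacturer"},
    news={"headline": "Operating profit revised upward by 51%, new record high"},
    prices=[dict(placeholder_day) for _ in range(5)],
    financials={"revenue_jpy": 41.3e9, "margin_pct": 9.26, "roe_pct": 8.38},
    macro={"cpi": 107.95, "policy_rate_pct": 0.75, "usd_jpy": 151.37},
)
print(model_input[:80])
```

Keeping everything in a single JSON object means the same structure can be reused for both training samples and live prediction requests.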

Output (3 Prediction Values)

Prediction Result (JSON format):

{
  "prev_close_to_next_open": "+1.2%",   ← Overnight movement
  "prev_close_to_next_close": "+2.5%",  ← Full-day movement
  "next_open_to_close": "+1.3%"         ← Intraday movement
}

In addition, the LLM generates analysis text explaining the reasoning behind each prediction, in both Japanese and English.
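The three values are linked: the overnight and intraday moves compound to the full-day move. The sketch below shows one way such values could be computed from three prices. The function names and the sample prices (1000, 1012, 1025) are assumptions chosen to reproduce the percentages in the example above, not data from the service.

```python
import math


def pct_change(start, end):
    """Percentage change from start to end."""
    return (end / start - 1.0) * 100.0


def movement_labels(prev_close, next_open, next_close):
    """Compute the three movement values from three prices."""
    return {
        "prev_close_to_next_open": pct_change(prev_close, next_open),
        "prev_close_to_next_close": pct_change(prev_close, next_close),
        "next_open_to_close": pct_change(next_open, next_close),
    }


def format_pct(value):
    """Format with an explicit sign and one decimal place."""
    return f"{value:+.1f}%"


labels = movement_labels(prev_close=1000.0, next_open=1012.0, next_close=1025.0)
formatted = {key: format_pct(value) for key, value in labels.items()}
print(formatted)

# Overnight and intraday moves compound to the full-day move.
overnight = 1 + labels["prev_close_to_next_open"] / 100
intraday = 1 + labels["next_open_to_close"] / 100
full_day = 1 + labels["prev_close_to_next_close"] / 100
print(math.isclose(overnight * intraday, full_day))
```

Because of this relationship, a check like the last line can flag a prediction whose three values are mutually inconsistent.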

System Architecture

News Published → Data Collection → AI Prediction → Data Sync → Web Display
                 (PHP/MySQL)       (LLM API)       (Python)    (Next.js)

┌───────────────────┐    ┌──────────────────┐    ┌──────────────┐
│ meloik            │    │ assetai_firebase │    │ stockSite    │
│                   │    │                  │    │              │
│ ・News collection │    │ ・MySQL →        │    │ ・Prediction │
│ ・Data integration│ ──►│   Firestore sync │ ──►│   display    │
│ ・LLM prediction  │    │ ・Diff updates   │    │ ・JP/EN      │
│ ・Translation     │    │ ・Cost optimize  │    │              │
│                   │    │                  │    │              │
└───────────────────┘    └──────────────────┘    └──────────────┘
  VPS (PHP/MySQL)          VPS (Python)            Vercel (Next.js)

Three subsystems work together, fully automated from news publication to prediction display.

Technical Challenges

Challenge 1: Building a Custom LLM — The Fine-tuning Journey

The core of Senrigan is an LLM specialized for stock prediction. A general-purpose LLM was not accurate enough on its own, so I fine-tuned a model on a custom dataset.

Finding the right approach required three phases of experimentation.

| Phase | Environment | Model | Result |
|---|---|---|---|
| 1. Local GPU | RTX 3060 (6GB) | ELYZA Llama-3-JP-8B | Out of VRAM for training |
| 2. Google Colab | T4 GPU (15GB) | ELYZA, LLM-jp, rinna | Insufficient accuracy |
| 3. OpenAI API | Cloud | gpt-4o-mini | Success: training completed in 8 minutes |

Phase 1 (Local): I attempted to train an 8B-parameter model on my laptop GPU (6GB VRAM). Inference worked, but training also has to hold gradients and optimizer state, which takes several times more memory than inference, so it was not feasible.
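A back-of-the-envelope estimate makes the gap concrete. The sketch below uses common rules of thumb, not measurements from this project: 2 bytes per parameter for 16-bit weights, 0.5 bytes for 4-bit weights, and roughly 16 bytes per parameter for full fine-tuning with Adam in mixed precision (activations excluded). The function names are illustrative.

```python
GB = 1e9  # decimal gigabytes, to match how GPU memory is usually quoted


def weights_gb(n_params, bytes_per_param):
    """Memory needed just to hold the model weights."""
    return n_params * bytes_per_param / GB


def full_finetune_gb(n_params):
    """Rule-of-thumb memory for full fine-tuning with Adam in mixed precision.

    2 bytes (16-bit weights) + 2 bytes (16-bit gradients)
    + 12 bytes (32-bit master weights and two Adam moment buffers).
    Activations are not included, so real usage is higher.
    """
    return n_params * 16 / GB


n_params = 8e9  # an 8B-parameter model

print(f"16-bit weights:   {weights_gb(n_params, 2):.0f} GB")
print(f"4-bit weights:    {weights_gb(n_params, 0.5):.0f} GB")
print(f"full fine-tuning: {full_finetune_gb(n_params):.0f} GB")
```

Under these assumptions, only heavily quantized weights fit in 6GB, while full fine-tuning is far out of reach, which is what motivates parameter-efficient methods such as LoRA.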

Phase 2 (Google Colab): Using LoRA (Low-Rank Adaptation), I experimented with three open-source models. Due to memory constraints, I had to significantly reduce the input data, which hurt accuracy. However, this phase proved that teaching new knowledge to an LLM through fine-tuning is possible.
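To show why LoRA fits on a small GPU, here is a dependency-free sketch of the idea: the frozen weight W stays untouched and only two small matrices B and A are trained, giving an effective weight of W + (alpha / r) * B·A. The matrix sizes, rank, and alpha below are illustrative assumptions, not the settings used in these experiments.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, b, a, alpha, rank):
    """Return W + (alpha / rank) * B @ A without modifying W."""
    scale = alpha / rank
    delta = matmul(b, a)
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


def lora_trainable_params(d_out, d_in, rank):
    """B is d_out x rank and A is rank x d_in."""
    return rank * (d_out + d_in)


# Tiny 3x3 example with rank 1.
w = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
a = [[0.5, -0.5, 1.0]]                # rank x d_in
b_zero = [[0.0], [0.0], [0.0]]        # d_out x rank, zero before training
b_trained = [[1.0], [0.0], [0.0]]     # pretend training changed B

unchanged = lora_effective_weight(w, b_zero, a, alpha=2, rank=1)
updated = lora_effective_weight(w, b_trained, a, alpha=2, rank=1)
print(updated[0])

# Parameter count for one 4096 x 4096 projection at rank 8.
full = 4096 * 4096
lora = lora_trainable_params(4096, 4096, 8)
print(f"{lora} trainable vs {full} frozen ({100 * lora / full:.2f}%)")
```

With B starting at zero the model initially behaves exactly like the base model, and only a fraction of a percent of the parameters need gradients and optimizer state.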

Phase 3 (OpenAI API): I shifted to OpenAI’s fine-tuning API, which allowed all 5 data types as input and completed training in just 8 minutes. With 1,009 custom training samples, the resulting model achieved practical prediction accuracy.

Challenge 2: Multilingual Support — Choosing a Translation LLM

Senrigan serves content in both Japanese and English. I used LLMs for translation, but selecting the right provider proved challenging.

Initially, I chose DeepSeek for its low cost. However, several problems emerged:

- Speed: Responses took minutes, causing frequent timeouts

- Language mixing: Chinese characters appeared in Japanese-to-English translations

- Input echoing: The model sometimes copied parts of the input data directly into its output

Switching all LLM processing to OpenAI (ChatGPT) resolved these quality and stability issues.
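Language mixing of the kind described above can be caught automatically before a translation is published. The following is a minimal sketch, assuming the English output should contain no kana or CJK ideographs; the function names and the `max_cjk` threshold are hypothetical, and a real pipeline might need to allow a few characters for company names.

```python
import re

# Hiragana and Katakana, CJK Extension A, and CJK Unified Ideographs.
CJK_PATTERN = re.compile(r"[\u3040-\u30ff\u3400-\u4dbf\u4e00-\u9fff]")


def find_cjk(text):
    """Return every kana or CJK ideograph found in the text."""
    return CJK_PATTERN.findall(text)


def is_clean_english(text, max_cjk=0):
    """True if the text has at most max_cjk kana/CJK characters."""
    return len(find_cjk(text)) <= max_cjk


good = "Operating profit revised upward by 51%, new record high"
mixed = "Operating profit 上方修正 by 51%"

print(is_clean_english(good))
print(is_clean_english(mixed), find_cjk(mixed))
```

A failed check could trigger a retry or a fallback provider instead of letting a mixed-language translation reach the site.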

Lesson learned: LLM providers must be evaluated holistically — cost alone is not a sufficient criterion. Language support quality matters enormously for multilingual applications.

Challenge 3: MySQL to Firestore Migration

Syncing data from the backend MySQL database to Firestore (a NoSQL database for the frontend) revealed fundamental differences between relational and document-oriented databases.

- Index design: Composite indexes that are trivial in MySQL must be created manually one by one in Firestore

- Preventing duplicate writes: Since Firestore charges per write operation, I built a custom differential update system using UNIX timestamps

- Cost optimization: I disabled unnecessary sync jobs and tuned cache strategies to reduce Firebase costs
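The timestamp-based differential update can be sketched as follows. This is an illustration of the general technique, not the project's code: the `updated_at` column, the `write` callback, and the in-memory dictionary standing in for Firestore are all assumptions.

```python
def select_changed_rows(rows, last_synced_at):
    """Return rows whose updated_at (UNIX seconds) is newer than the last sync."""
    return [row for row in rows if row["updated_at"] > last_synced_at]


def sync_changes(rows, last_synced_at, write):
    """Write only changed rows and return (new watermark, number of writes)."""
    changed = select_changed_rows(rows, last_synced_at)
    for row in changed:
        write(str(row["id"]), row)
    watermark = max((row["updated_at"] for row in changed), default=last_synced_at)
    return watermark, len(changed)


# In-memory stand-ins for the MySQL table and the Firestore collection.
mysql_rows = [
    {"id": 1, "prediction": "+1.2%", "updated_at": 1_700_000_000},
    {"id": 2, "prediction": "-0.4%", "updated_at": 1_700_000_060},
]
firestore_stub = {}


def write_stub(doc_id, data):
    firestore_stub[doc_id] = dict(data)


watermark, first_writes = sync_changes(mysql_rows, 0, write_stub)
watermark, second_writes = sync_changes(mysql_rows, watermark, write_stub)

# One row changes in MySQL; only that row is written on the next run.
mysql_rows[0].update(prediction="+1.5%", updated_at=1_700_000_120)
watermark, third_writes = sync_changes(mysql_rows, watermark, write_stub)
print(first_writes, second_writes, third_writes)
```

One caveat with a strict `>` comparison is that a row modified in the same second as the stored watermark would be skipped, so a production version would need to handle that boundary.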

Tech Stack

| Layer | Technology | Purpose |
|---|---|---|
| AI Prediction | OpenAI gpt-4o-mini (Fine-tuned) | Stock price movement prediction |
| Prediction Reasoning | OpenAI gpt-5-nano | News analysis and reasoning text |
| Translation | OpenAI gpt-5-mini | JP→EN translation, news summarization |
| Backend | PHP / MySQL | Data collection, integration, batch processing |
| Data Sync | Python / Firestore | MySQL→Firestore differential updates |
| Frontend | Next.js / Vercel | Web UI (ISR, bilingual JP/EN) |
| Infrastructure | Sakura VPS × 2 | Batch processing and data sync servers |

Technologies Explored

During the fine-tuning process, I experimented with and validated the following techniques.

| Technology | Overview | Where Used |
|---|---|---|
| LoRA | Lightweight fine-tuning that trains only small low-rank update matrices while the base weights stay frozen | Training 3 models on Colab |
| 8-bit Quantization | Roughly halves weight memory compared with 16-bit, at a small cost in quality | Model loading on local/Colab |
| QLoRA | LoRA adapters trained on top of a quantized base model | Effective training method on Colab |
| GGUF Conversion | Converting HuggingFace models to the GGUF format for CPU inference | Local inference testing |
| SFTTrainer | HuggingFace TRL library’s supervised fine-tuning tool | Training execution on Colab |
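To make the quantization row concrete, here is a minimal sketch of simple absolute-maximum 8-bit quantization in plain Python. It illustrates the general idea only; quantization libraries use more refined schemes, and the sample weights are made up.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 1.27, -1.0]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(q)
print(f"max round-trip error: {max_error:.6f} (bounded by scale / 2 = {scale / 2:.6f})")
```

Each weight is stored in 1 byte instead of 2, which is where the memory saving comes from, and the rounding error per weight is bounded by half the scale.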

Training Data Design

Key characteristics of the custom training dataset.

| Attribute | Value |
|---|---|
| Sample Count | 1,009 |
| Token Count | ~1.3 million tokens |
| Data Structure | 5 data types integrated into a single JSON |
| Labels | Actual next-day price movement rates (3 types) |
| Target Market | Tokyo Stock Exchange (Prime, Standard, Growth) |

News and IR information is sourced from TDnet (Timely Disclosure network) and other publicly disclosed corporate information. Please always verify with original sources for accuracy.
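For reference, about 1.3 million tokens across 1,009 samples works out to roughly 1,300 tokens per sample. The sketch below shows how one such sample could be written as a line of chat-format JSONL, the format OpenAI's fine-tuning API accepts for chat models. The system prompt, the field names inside the input, and the helper name are assumptions for illustration, not the project's actual schema.

```python
import json

# Hypothetical system prompt; the real wording is not given in this article.
SYSTEM_PROMPT = "Predict next-day stock price movements from the given data."


def to_training_line(model_input, labels):
    """Serialize one training sample as a single JSONL line."""
    record = {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": json.dumps(model_input, ensure_ascii=False)},
            {"role": "assistant", "content": json.dumps(labels, ensure_ascii=False)},
        ]
    }
    return json.dumps(record, ensure_ascii=False)


sample_input = {
    "company": {"industry": "Chemicals"},
    "news": {"headline": "Operating profit revised upward by 51%, new record high"},
}
sample_labels = {
    "prev_close_to_next_open": "+1.2%",
    "prev_close_to_next_close": "+2.5%",
    "next_open_to_close": "+1.3%",
}

line = to_training_line(sample_input, sample_labels)
print(line[:100])
```

Writing one such line per sample produces the training file; the assistant message holds the actual price movements the model should learn to output.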

Development Process in Detail

I documented the full development journey in an 8-part blog series.

| Part | Topic |
|---|---|
| Part 1 | Introduction and Overview |
| Part 2 | Local GPU Challenge and Setbacks |
| Part 3 | LoRA and Quantization Explained |
| Part 4 | Stock Prediction on Google Colab |
| Part 5 | OpenAI API Fine-tuning |
| Part 6 | Training Data Design |
| Part 7 | Translation LLM Selection: From DeepSeek to ChatGPT |
| Part 8 | MySQL to Firestore Migration and Production |

What I Learned

Data Quality Determines Model Performance

After testing 3 open-source models and 1 commercial model — 4 approaches in total — the most important factor turned out to be not “model size” but “input data quality and completeness.” The model trained on full data via OpenAI API vastly outperformed models trained on truncated data on Colab.

LLM Fine-tuning Is Accessible to Individual Developers

Fine-tuning is not reserved for large corporations. With OpenAI’s API, I built a custom model from 1,009 training samples in about 8 minutes, for a few dollars. The key investment is in preparing high-quality training data.

Evaluate LLM Providers Holistically

Choosing based on cost alone leads to quality problems down the road. For multilingual applications in particular, the language balance of a model’s training data directly affects output quality. Stability, quality, and cost must be weighed together.

Disclaimer

Predictions generated by this service are automatically produced by LLMs and do not constitute investment advice. All investment decisions are your own responsibility.