How I See Meta's AI Strategy as an Investor — Business Realignment, Massive Capex, and the Value of Data Center Assets

When looking at Meta’s AI investments, attention naturally goes to AI models like Llama or the success of in-house chip development. Over the past few years, Meta has been deploying capital across a wide range — AI models, semiconductors, VR/metaverse, and research organization restructuring — all in parallel.

But if you step back and look at the recent moves from a distance, a different picture emerges. What Meta is really betting big on may not be AI models themselves, but rather the computing resources and data center assets that will serve as the foundation of the AI era.

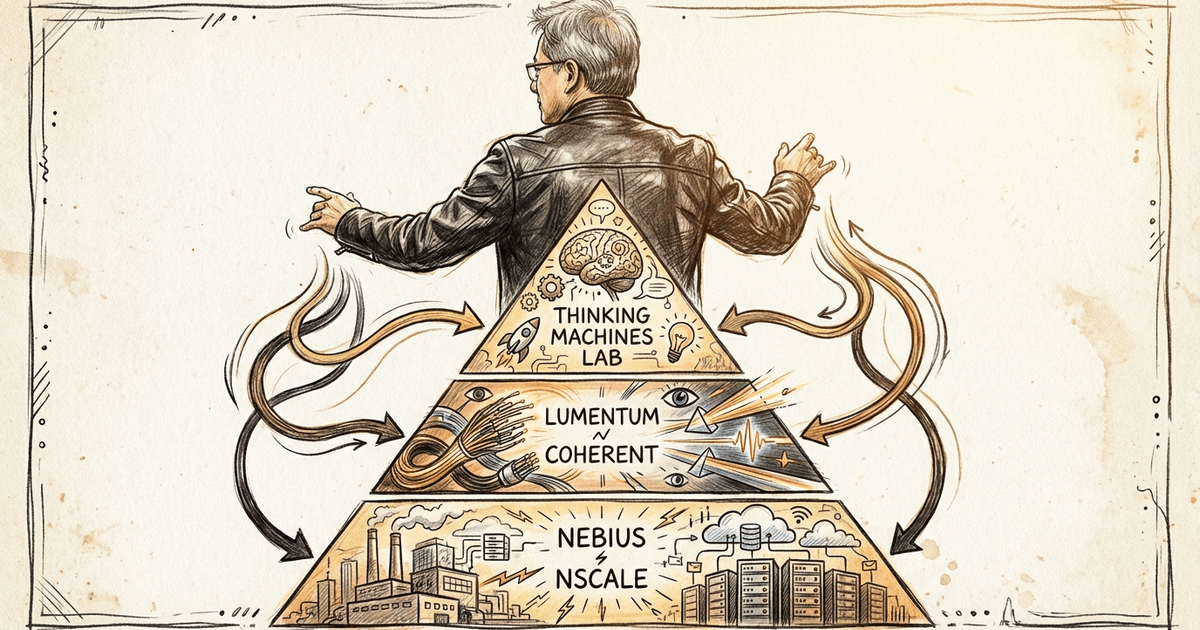

Meta has indicated capex guidance of $115–135 billion for 2026, citing AI infrastructure investment as the primary driver. Reuters has reported that Meta’s AI-related data center investment is planned to reach approximately $600 billion by 2028. Meta is also securing external resources — up to $27 billion in AI compute capacity from Nebius, and leasing Google’s TPUs as well.

The key question here is: to which areas, and in what form of assets, is Meta allocating its capital?

Models can pay off big if you win, but the residual value when you lose is hard to predict.

In-house chips are similar — if they cannot achieve sufficient competitiveness, they may remain as internally optimized components and nothing more.

Data center assets, on the other hand — encompassing power, land, cooling, and GPU deployment — tend to retain value more reliably as long as AI demand continues. This is where the economic logic of Meta’s recent capital allocation seems to lie.

Key Points to Understand First

- Meta expects 2026 capex of $115–135 billion

- Reuters reports that Meta’s AI data center investment is planned to reach approximately $600 billion by 2028

- Meanwhile, AI models, in-house chips, and VR/metaverse have all seen delays, downsizing, or restructuring

- Data center investment alone continues to accelerate

- Taken together, this suggests Meta is betting more heavily on computing infrastructure, which retains value as long as AI demand persists, than on winning any single model race

Meta’s recent moves can look like confusion at first glance. But while individual news items may not tell a clear story, looking at capital allocation as a whole reveals a fairly consistent stance. Reviewing both Meta’s official IR and the Reuters reports makes this direction quite clear.

1. Meta Continues to Invest in AI Models, but Struggles to Land a Decisive Win

Key Points

- Llama 4 fell short of expectations during development, and its release was delayed

- Behemoth was also postponed due to performance concerns

- The new model “Avocado” has been pushed back as well

Details

Meta has established a significant presence in the open model camp through the Llama series. However, looking at the recent trajectory, things have not been entirely smooth on the model front.

In April 2025, Reuters reported that Llama 4 fell short of Meta’s expectations on technical benchmarks in reasoning and math during development, delaying its release. The flagship model Behemoth was postponed due to capability concerns. Then in March 2026, the rollout of the new model “Avocado” was also delayed to at least May.

This does not mean Meta has abandoned AI model development. It will likely remain an important pillar going forward. But from an investor’s perspective, the concern is that the model race carries high uncertainty relative to the massive investment required. If you win, the payoff is large — but if you fall behind, there is no guarantee that the investment will translate into lasting assets.

Two further factors add to that concern: the commoditization of AI models and the rapid rise of Chinese open-source LLMs, both of which make it harder to earn profits from models.

2. In-House Chips Appear to Be Shifting from an Independence Strategy to a Pragmatic One

Key Points

- Meta had previously envisioned in-house AI chips to reduce NVIDIA dependency

- But some early efforts were slower than off-the-shelf products and were scrapped

- Development continues, but the original ambitious vision has been tempered

Details

Meta has long tried to reduce its NVIDIA dependency through in-house AI chips. In April 2023, Reuters reported that Meta was internally planning more ambitious custom chips targeting both training and inference. But by September of the same year, some early chips had proven slower than off-the-shelf alternatives, and several AI chips were scrapped.

Meta has not fully retreated: it began testing its first in-house AI training chip in 2025 and published a new MTIA roadmap in 2026. However, it has also signed a multi-year contract to lease Google’s TPUs. This suggests that Meta’s focus has shifted from “achieving full self-sufficiency through in-house chips” to “how to secure overall computing resources.”

From an investor’s perspective, this shift makes sense.

Building semiconductors in-house sounds attractive, but achieving a competitive advantage is extremely difficult. If external resources like the Google partnership can be leveraged, Meta’s AI strategy remains viable whether or not its own chips succeed. Judged on economic rationality, Meta’s current position looks closer to “pragmatic compute procurement” than “ambitious vertical integration.”

3. VR and Metaverse Have Retreated from Future Centerpiece to Restructuring Target

Key Points

- Reality Labs has recorded large losses over many years

- Headcount reductions have continued since 2025

- Horizon Worlds has also scaled back its VR-centric rollout

Details

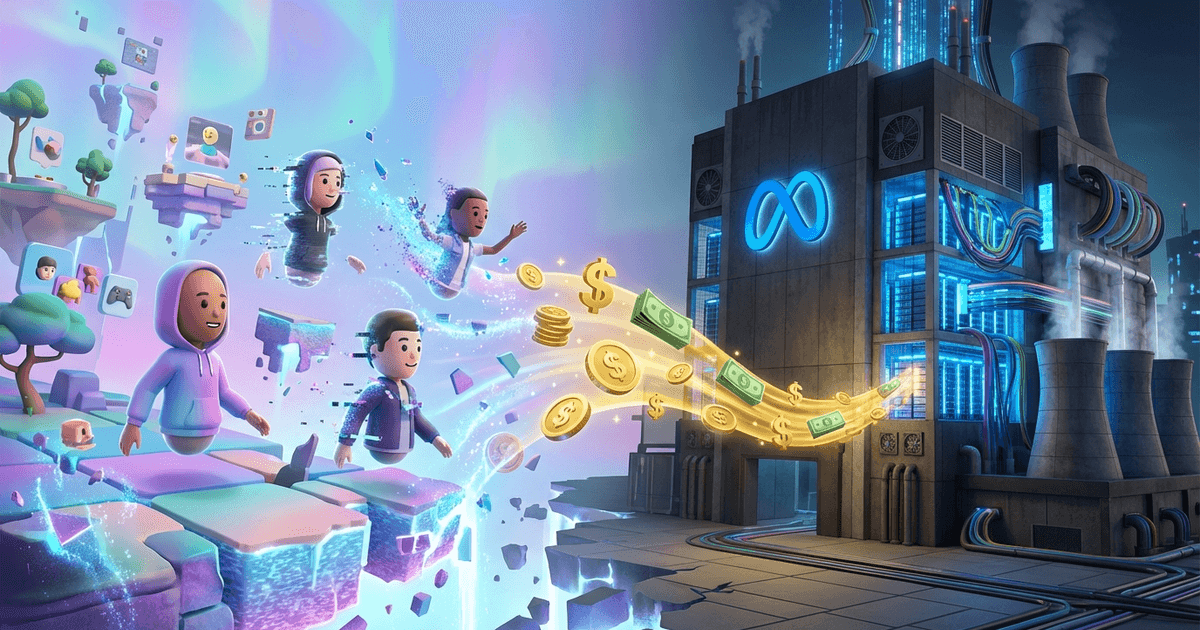

Looking back at Meta’s long-term strategy, VR and metaverse were enormous themes. But the recent moves are quite pragmatic.

Reuters has reported that Reality Labs has accumulated over $60 billion in losses since 2020. In April 2025, headcount reductions were made in Reality Labs-related divisions, and in January 2026, approximately 10% of the division was cut.

Horizon Worlds is also telling. In March 2026, Wired reported that Meta was shutting down Horizon Worlds on Quest and shifting to a mobile-first approach. Meta subsequently walked back the full shutdown somewhat, but at the very least, the VR-centric expansion phase appears to be over.

The important point here is not whether Meta has completely abandoned VR. What matters is that management is now willing to scale back even areas that have received years of capital investment when returns seem unlikely. In other words, Meta has become more conscious of capital efficiency than it used to be.

4. Headcount and Research Organizations Are Also Shifting from “Broad Coverage” to “Focused Support”

Key Points

- Meta carried out major layoffs in 2022 and 2023

- AI-focused organizational restructuring has continued since

- Recent reports suggest additional cuts are being considered to fund AI investment

Details

Meta laid off approximately 11,000 people in November 2022 and another roughly 10,000 in spring 2023 — the so-called “year of efficiency.” Organizational restructuring has continued since then. In June 2025, Reuters reported that Meta launched Superintelligence Labs and reorganized its AI division, reportedly in response to poor reception of Llama 4 and the departure of key talent.

Then in March 2026, Reuters reported that Meta was considering headcount reductions of more than 20% to support AI infrastructure investment. Meta called this reporting speculative, but the market’s reading is straightforward: rather than adding headcount across the board for AI, Meta is compressing other cost structures to prioritize AI infrastructure.

This is not simply downsizing — it can be seen as organizational allocation moving in the same direction as capital allocation. For investors, watching where Meta cuts and where it protects reveals management’s true priorities.

5. Data Center Investment Alone Continues to Strengthen

Key Points

- 2026 capex up to $135 billion

- Reuters reports approximately $600 billion in DC investment planned through 2028

- External resource procurement is also advancing: a contract of up to $27 billion with Nebius, plus Google TPU leasing

- Meta appears to be positioning itself not just as a model company, but as an owner of computing resources

Details

As we have seen, Meta is going through trial and error and restructuring across models, chips, VR, and organizational structure. But among all of these, data center investment alone stands out as exceptionally strong.

According to Meta’s official IR, the 2026 capex outlook is $115–135 billion. Reuters reports that Meta plans approximately $600 billion in AI-related data center investment through 2028. Additionally, Meta has secured up to $27 billion in AI compute capacity from Nebius and is leasing Google’s TPUs.
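As a quick arithmetic check on these figures, the sketch below computes the midpoint and width of the 2026 guidance range and expresses the reported $600 billion as a multiple of the top of that range. The variable and function names are my own. The inputs are only the figures cited in this section, and because the start year of the $600 billion plan is not stated here, the multiple is a scale comparison, not a forecast.

```python
# Figures cited in this article, in billions of US dollars.
CAPEX_2026_LOW = 115.0         # low end of Meta's 2026 capex guidance
CAPEX_2026_HIGH = 135.0        # high end of Meta's 2026 capex guidance
DC_PLAN_THROUGH_2028 = 600.0   # Reuters-reported AI data center figure


def guidance_midpoint(low: float, high: float) -> float:
    """Return the midpoint of a guidance range."""
    return (low + high) / 2


def multiple_of_annual(total: float, annual: float) -> float:
    """Express a cumulative total as a multiple of one annual figure."""
    return total / annual


midpoint = guidance_midpoint(CAPEX_2026_LOW, CAPEX_2026_HIGH)
spread = CAPEX_2026_HIGH - CAPEX_2026_LOW
multiple = multiple_of_annual(DC_PLAN_THROUGH_2028, CAPEX_2026_HIGH)

print(f"2026 guidance midpoint: ${midpoint:.0f}B (range width ${spread:.0f}B)")
print(f"$600B is about {multiple:.1f}x the top of the 2026 range")
```

Even at the top of the 2026 range, the reported plan corresponds to more than four years of spending at that rate, which gives a sense of the scale being described.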

The question for investors is: why is Meta betting so heavily on data centers? To me, the reasoning looks quite rational.

AI models can pay off big, but the residual value when you lose is unstable. In-house chips, unless they achieve NVIDIA-level competitiveness, offer no guarantee that the capital markets will continue to value them highly. Data center assets, however, are different in nature. A computing foundation encompassing power, land, cooling, networking, and GPU deployment is relatively easy to repurpose and preserves future optionality as long as AI demand persists. In other words, in what looks like an all-or-nothing AI race, Meta is tilting its capital toward comparatively “repurposable assets.”
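To make the residual-value argument concrete, here is a toy expected-value sketch. Every number in it (win probabilities, payoff multiples, residual fractions) is a hypothetical placeholder I chose for illustration, not an estimate from Meta, Reuters, or any other source. Only the structure of the comparison reflects the argument above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bet:
    """One way of deploying $1 of capital. All fields are hypothetical."""
    name: str
    p_win: float          # assumed probability the bet pays off
    win_multiple: float   # assumed value per $1 invested if it wins
    loss_residual: float  # assumed value per $1 retained if it loses

    def expected_value(self) -> float:
        # Probability-weighted value across the win and lose scenarios.
        return self.p_win * self.win_multiple + (1 - self.p_win) * self.loss_residual


# Placeholder inputs: high payoff and low residual for models and chips,
# modest payoff and high residual for data center assets.
BETS = [
    Bet("frontier model", p_win=0.3, win_multiple=4.0, loss_residual=0.1),
    Bet("in-house chip", p_win=0.3, win_multiple=3.0, loss_residual=0.2),
    Bet("data center", p_win=0.6, win_multiple=1.5, loss_residual=0.7),
]

for bet in BETS:
    print(
        f"{bet.name:15s} expected value per $1: {bet.expected_value():.2f}, "
        f"value if it loses: {bet.loss_residual:.2f}"
    )

# The bet that keeps the most value in the losing scenario.
safest = max(BETS, key=lambda b: b.loss_residual)
print(f"Highest residual value when losing: {safest.name}")
```

With these placeholder inputs, the model bet has the highest expected value but the weakest value in the losing scenario, while the data center bet keeps most of its value when it loses. Different inputs would change the ranking. The point is only that a capital allocator who cares about the downside can reasonably prefer the asset with the higher residual value.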

Of course, Meta may not become a large-scale cloud provider in the same way as Microsoft or Amazon. But from an economic rationality standpoint, securing computing resources themselves is more defensible than betting everything on a single model or a single chip. This is a distinctly investor-minded approach.

My View as an Investor

On the surface, Meta naturally talks about AI models and superintelligence.

But looking at the reality of capital allocation, what Zuckerberg truly seems to prioritize is not “perfectly predicting which AI approach will win” but rather “securing assets that retain value under any scenario.”

There is a possibility that Meta falls behind on models. There is a possibility that in-house chips cannot replace NVIDIA. Areas that have received years of investment, like VR, may continue to shrink.

And yet, AI-oriented data centers and computing resources will remain. Moreover, as AI demand spreads across society, their value is likely to increase. Meta’s massive capex, then, can be viewed not as mere AI hype, but as an act of converting uncertain technology competition into infrastructure investment where asset value is more likely to endure.

As an investor, evaluating Meta’s AI strategy solely on whether it can beat OpenAI or Google in models and chips feels too narrow.

What we should be looking at instead is where Meta invests to maximize the durability of asset value in the AI era. In that sense, Meta’s recent moves look quite clear-headed.
