NVIDIA Strengthens Its Grip on AI Infrastructure Through Startup Investments

NVIDIA’s recent capital moves show that the company’s focus extends well beyond semiconductors.

Through investments in startups and long-term supply agreements, NVIDIA is expanding into adjacent layers: AI computing capacity, data centers, optical interconnects, and AI model developers. Taken together, these moves suggest that NVIDIA is trying to strengthen its position across the entire AI infrastructure stack, not just in GPU sales.

1. What We Can Confirm as Fact

1-1. Investments in Cloud and Data Center Companies

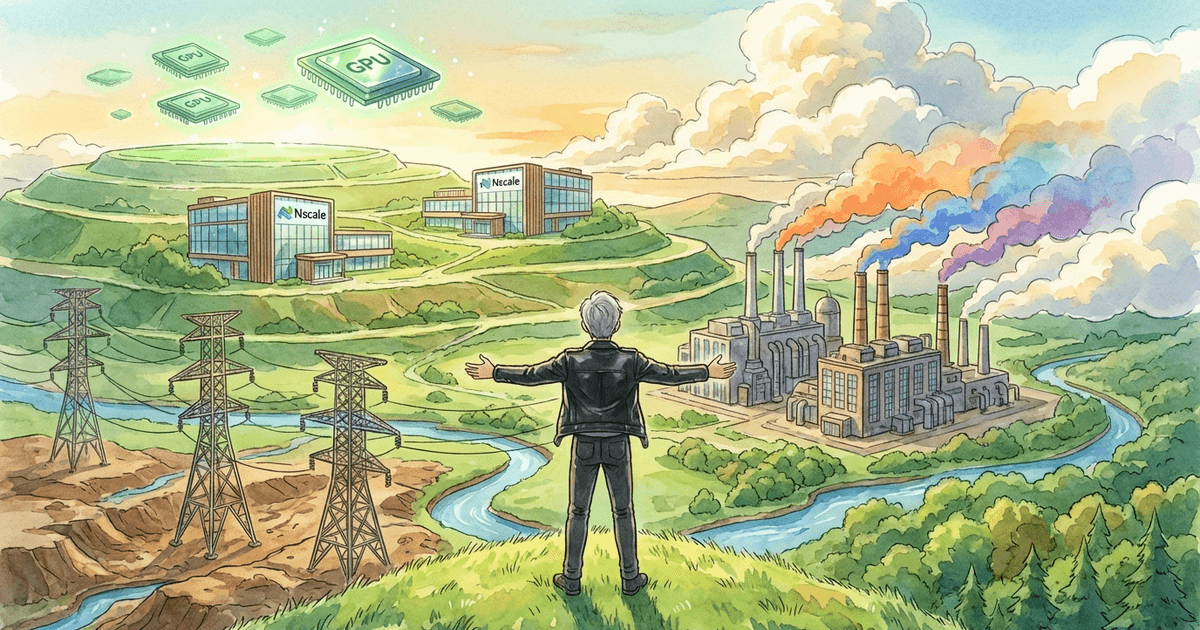

According to Reuters, NVIDIA invested $2 billion in Nebius, an AI cloud company, acquiring approximately 8.3% of its shares. Nebius has announced plans to build over 5GW of data center capacity by 2030.

Separately, Reuters describes Nscale as an NVIDIA-backed company. Nscale raised $2 billion at a valuation of $14.6 billion. The company has its own data centers, GPUs, and software stack, and plans to expand capacity to meet growing demand.

These two cases show that NVIDIA is not just supplying GPUs; it is also involved in expanding the computing infrastructure where those GPUs are deployed. Its capital is going not only toward selling chips, but also into the “containers” that absorb demand for them.

1-2. Involvement in the Optical Interconnect Layer

Reuters reports that NVIDIA plans to invest approximately $2 billion each in Lumentum and Coherent. The purpose is to expand R&D and manufacturing capacity in photonics.

As AI systems scale up, the challenge goes beyond compute performance. Moving large amounts of data between chips, racks, and clusters means the interconnect method itself affects performance and power efficiency. The Reuters reporting suggests NVIDIA treats this as a critical component.

1-3. Capital and Supply Relationships with AI Startups

According to Reuters, Mira Murati’s Thinking Machines Lab received a significant investment from NVIDIA and secured access to at least 1GW of next-generation Vera Rubin systems. NVIDIA also described this partnership on its official blog.

This shows that NVIDIA is strengthening its presence not just as a chip supplier, but also as a capital provider for AI startups. It can be read as an early move to establish both capital and supply ties with companies that could become major customers in the future.

1-4. NVIDIA’s Own Description Has Changed

In its annual report, NVIDIA describes its offerings not as standalone GPUs, but as “data center scale infrastructure” that includes GPUs, CPUs, DPUs, interconnects, switches, systems, networks, and software.

The report also states that data centers, energy, and capital are important for customers and partners to expand AI infrastructure, and that shortages in these areas could affect future performance.

This wording shows that NVIDIA itself does not see competition as just about chip sales. At least in its self-description, the company is already positioning itself as a provider of infrastructure that powers entire data centers.

2. What These Facts Show When Viewed Together

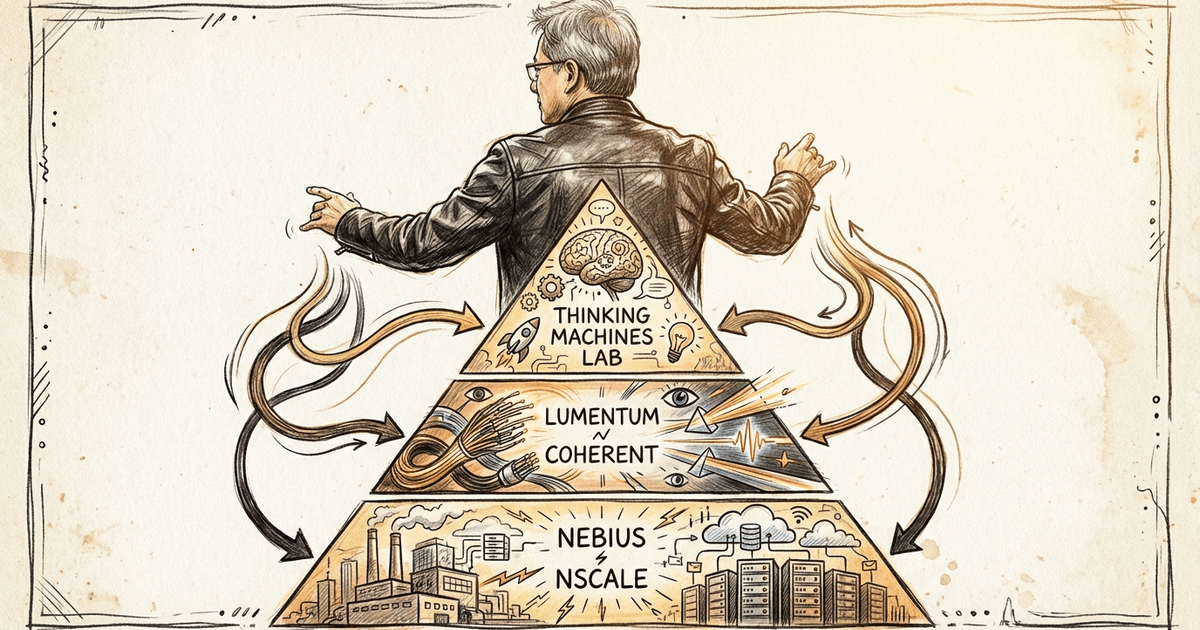

Organizing the above by layer:

- Cloud and AI computing capacity — Nebius, Nscale

- Optical interconnects — Lumentum, Coherent

- AI models — Thinking Machines Lab

Looking at this list, the investments are not scattered randomly. They appear to be placed along the main components of AI infrastructure.

3. My View: NVIDIA Is Reaching Beyond the GPU

From here, this is my own interpretation.

These recent moves look less like diversified investments and more like capital being placed at the main bottlenecks of AI infrastructure.

The competitive landscape for AI has become much more complex than before. Building high-performance GPUs is no longer enough. You also need the data centers to run them, sufficient power, efficient interconnects, and actual customers and AI companies that will consume the compute.

Because of this, I think what matters for NVIDIA is not just “selling the next GPU,” but broadly building the environment where those GPUs continue to be used.

For example, the recent moves can be read as follows:

- Nebius and Nscale — Expanding the AI computing capacity and data center infrastructure that will host GPUs.

- Lumentum and Coherent — Strengthening the optical interconnect and communication layer needed for large-scale AI compute.

- Thinking Machines — Building early capital and supply ties with an AI lab that will consume large amounts of compute in the future.

Viewed this way, NVIDIA does not seem to be pursuing classic vertical integration in the sense of owning everything itself. Instead, I think it is proactively engaging with the parts of AI infrastructure most likely to become bottlenecks, working to maintain a structure centered on its own GPUs.

4. An Alternative Reading

That said, it is worth being careful not to read these moves too one-sidedly.

NVIDIA’s investments and supply agreements do not necessarily mean “vertical integration as dominance.” The AI infrastructure market is still in an early stage of rapid expansion, and there are shortages across data centers, power, optical interconnects, and AI labs.

From that perspective, NVIDIA’s actions could be explained as natural investment behavior to support demand for its own products, rather than a strategy for control.

The companies NVIDIA invests in do not automatically fall under its control, either. Depending on conditions, cloud providers and AI labs may also adopt AMD hardware, custom chips, or alternative inference platforms. Reuters has reported on Cerebras as an NVIDIA competitor, for example, so the AI infrastructure landscape is far from NVIDIA-only.

So concluding that “NVIDIA is trying to control everything” would be too strong at this point. It seems more accurate to say that NVIDIA is strengthening its presence in the important layers of AI infrastructure.

5. Summary

I think NVIDIA’s recent investments are easier to understand not as individual venture investments, but as moves to organize the broader AI infrastructure around itself.

Three key points:

- NVIDIA is investing capital not only in GPUs, but in the surrounding layers where those GPUs are used

- Its investments and supply agreements span the critical layers: cloud and AI computing capacity, optical interconnects, and AI models

- As a result, NVIDIA appears to be shaping AI infrastructure into a structure centered on itself

In other words, what NVIDIA is doing is not classic vertical integration where it owns everything. Rather, it is placing investees and supply partners within its sphere of influence, binding together the main layers of AI around its own ecosystem.

With large tech companies, NVIDIA is an important supplier but cannot dictate business or product strategy. With startups, however, NVIDIA may be able to exert stronger influence through investment, supply contracts, and technical dependency. The recent moves can be read through that lens.

There is also a different way to read this. These investments might be less about securing the future of AI infrastructure and more about placing NVIDIA GPUs into investee companies at scale, which would create demand ahead of time and boost NVIDIA’s own revenue.

In fact, many of NVIDIA’s investees are also its customers. So this can be seen as ecosystem building, or as a circular structure where demand supports itself.

I do not think we need to settle on one interpretation right now. But at a minimum, it is becoming quite clear that NVIDIA is moving beyond the boundaries of a semiconductor company and using a network of startups to extend its influence across all of AI infrastructure.

NVIDIA’s recent actions suggest an attempt to reorganize the order of AI infrastructure around itself, going well beyond chips. How we read that shift will matter as we watch AI infrastructure evolve.
