What CXL Tells Us About the Next Shift in AI Data Centers: Shared Memory and Optical Interconnects

When people talk about AI infrastructure, the conversation usually centers on GPUs, TPUs, and HBM. But another important technology is now gaining attention: CXL (Compute Express Link).

In simple terms, CXL is a connection standard that allows memory to be placed outside of individual servers and shared across them.

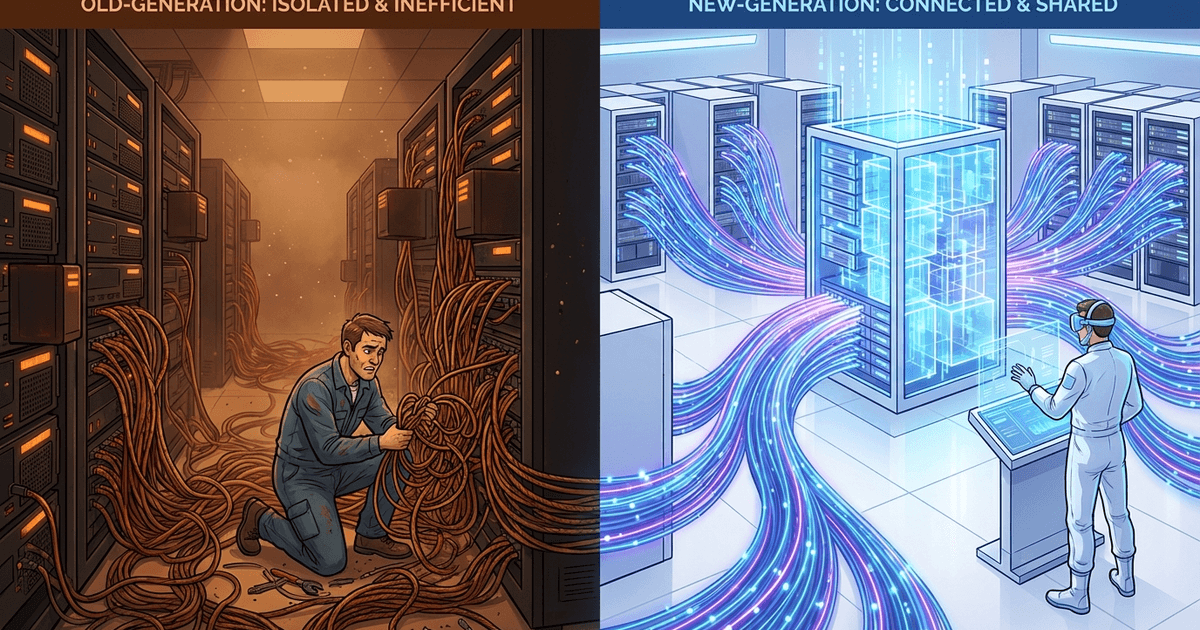

In traditional servers, memory is fixed to each server’s motherboard. Even if one server has spare memory, another server cannot use it.

CXL loosens this constraint. It allows processors to directly access memory devices located outside the server, enabling a framework where memory can be shared across an entire data center.

In other words, CXL moves us from “memory locked to each server” toward “memory available across the whole data center.”

According to The Information, CXL is not a new concept — it has existed as an open standard for several years.

Despite that, large-scale adoption has been slow because CXL requires coordination across CPUs, GPUs, memory, controllers, and software: the whole industry needs to move together for it to work. The Information reports that this coordination difficulty is exactly what delayed commercialization.

But the situation is changing.

The AI boom has driven memory shortages and price increases. Google has started using CXL in its own data centers, according to The Information. And NVIDIA’s upcoming Vera CPU will support PCIe Gen6/CXL 3.1, as stated in NVIDIA’s own technical blog. CXL is moving from “an interesting standard” toward “a technology companies need to adopt.”

Here is my view.

I think CXL is not simply about “adding more memory.” It introduces the concepts of sharing and tiering into AI memory architecture, which has been dominated by HBM. On top of that, optical interconnects are starting to address the physical limits of copper wiring. What matters is that these changes are happening at the same time.

If this trend continues, data centers could become much leaner. The constraints that have weighed heavily — memory placement, wiring, power, and cooling — may gradually loosen, allowing more efficient overall operation.

Below, I separate facts from my own analysis.

1. What Is Actually Happening

1-1. CXL Makes It Possible to Share Memory Across Servers

According to The Information, in traditional servers, memory is fixed to each server’s slots. Even if a neighboring server has spare memory, you cannot borrow it. CXL loosens this constraint by allowing processors to access memory located elsewhere in the data center. Google is reportedly already placing CXL controllers between CPUs and external memory pools.
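To make the "stranded memory" point concrete, here is a minimal sketch comparing fixed per-server memory with an idealized shared pool. The server names, capacities, and demands are made-up numbers for illustration, and the pooled case deliberately ignores the extra latency and fabric cost of remote access.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    local_gb: int   # memory physically installed in this server
    demand_gb: int  # memory its workloads currently want


def unmet_demand_fixed(servers):
    """Demand that goes unserved when each server can only use its own memory."""
    return sum(max(0, s.demand_gb - s.local_gb) for s in servers)


def unmet_demand_pooled(servers):
    """Same question if all installed memory behaved as one shared pool.

    Idealized: ignores remote-access latency and the cost of the fabric.
    """
    total_capacity = sum(s.local_gb for s in servers)
    total_demand = sum(s.demand_gb for s in servers)
    return max(0, total_demand - total_capacity)


# Hypothetical fleet: one server is short while its neighbors sit partly idle.
servers = [
    Server("a", local_gb=512, demand_gb=700),  # short by 188 GB
    Server("b", local_gb=512, demand_gb=200),  # 312 GB idle
    Server("c", local_gb=512, demand_gb=450),  # 62 GB idle
]

print(unmet_demand_fixed(servers))   # 188
print(unmet_demand_pooled(servers))  # 0
```

In the fixed case, server "a" cannot borrow from "b" or "c", so 188 GB of demand goes unmet even though the fleet as a whole has spare capacity.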

The CXL standard itself has also evolved. ABI Research’s CXL 3.1 overview explains how CXL 2.0 introduced switching and memory pooling, while CXL 3.0/3.1 extended these with advanced switching and memory sharing between accelerators. CXL 3.1 is based on PCIe 6.1, which signals at 64 GT/s per lane, or roughly 128 GB/s in each direction on an x16 link before protocol overhead.
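As a back-of-the-envelope check on link bandwidth, the sketch below computes the raw rate of an x16 link at PCIe 6.x's 64 GT/s per lane. The function name is my own, and the result is a raw figure: it ignores FLIT, FEC, and protocol overhead, so usable throughput is lower.

```python
def raw_link_bandwidth_gbytes(gt_per_s: float, lanes: int) -> float:
    """Raw one-direction bandwidth in GB/s, counting one bit per transfer per lane.

    Ignores encoding and protocol overhead, so real throughput is lower.
    """
    return gt_per_s * lanes / 8  # bits -> bytes


PCIE6_GT_PER_S = 64  # PCIe 6.x signalling rate per lane

per_direction = raw_link_bandwidth_gbytes(PCIE6_GT_PER_S, lanes=16)
print(per_direction)      # 128.0 (GB/s each way)
print(per_direction * 2)  # 256.0 (GB/s counting both directions)
```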

1-2. NVIDIA Will Officially Support CXL 3.1 with Vera

In NVIDIA’s technical blog, the comparison table between Grace and Vera explicitly lists “PCIe/CXL Gen6/CXL 3.1.” Vera uses up to 1.5TB of LPDDR5X memory on the CPU side, tightly connected to HBM4 on the Rubin GPU side through NVLink-C2C. In short, the design aims to use large-capacity CPU memory and ultra-fast GPU memory as closely together as possible.

NVLink is NVIDIA’s proprietary high-speed interconnect for connecting GPUs to each other, or CPUs to GPUs, with higher bandwidth and lower latency than PCIe. The “C2C” in NVLink-C2C stands for chip-to-chip.

NVIDIA is beginning to design the entire AI factory around not just HBM, but a broader external memory hierarchy.

A standard alone does not drive adoption. It becomes realistic only when major ecosystem players officially support it on the CPU side. Vera’s arrival positions CXL for a large-scale field test.

1-3. Google Already Has Operational Experience with Optical Interconnects

Google is ahead not only on CXL itself, but also on the physical layer beyond it. According to Google Cloud’s official blog, the company has integrated OCS (Optical Circuit Switching) and WDM (Wavelength Division Multiplexing) into its Jupiter data center network for over eight years. OCS switches optical connections, while WDM sends multiple optical signals through a single fiber to increase capacity. Google says this has enabled performance improvements, lower latency, lower cost, reduced power consumption, and zero-downtime upgrades.
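The WDM idea reduces to simple arithmetic: each wavelength on a fiber is an independent channel, so capacity scales with the number of wavelengths. The sketch below uses illustrative figures (4 wavelengths at 100 Gb/s each); they are assumptions for the example, not numbers from Google's Jupiter network.

```python
def fiber_capacity_gbps(wavelengths: int, gbps_per_wavelength: float) -> float:
    """Aggregate capacity of one fiber carrying several independent wavelengths."""
    return wavelengths * gbps_per_wavelength


# Illustrative values only.
print(fiber_capacity_gbps(1, 100))  # 100
print(fiber_capacity_gbps(4, 100))  # 400
```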

The same approach is used on the TPU side. Google Cloud describes TPU v4 as connecting 4,096 chips using its own optical switch technology. Ironwood, a newer TPU generation, also uses an optical network that can reconfigure connections as needed. In other words, Google is already running optical technology at scale, not just for data center networking but also for chip-to-chip connectivity in AI compute.

1-4. NVIDIA Is Investing in Optical Components

Reuters reports that NVIDIA is investing $2 billion each in Lumentum and Coherent. The article indicates that NVIDIA is pursuing photonics to accelerate AI processors and secure access to advanced laser and optical networking products.

I think this is not a peripheral investment. It suggests that NVIDIA sees next-generation AI data centers as unviable without optical technology. Google leads with operational know-how, and NVIDIA follows with supply chain investment. That pattern is becoming visible.

2. My View: The Point Is Not CXL Alone, but Three Changes Happening Together

From here, this is my opinion.

I think we should not look at this simply as “whether CXL will catch on.” What matters is that three things are moving at the same time:

- The memory architecture is shifting away from HBM-only designs

- Copper wiring and electrical signals are hitting physical limits, pushing a transition toward optical interconnects

- Memory that was locked to individual servers is becoming shareable

When these three converge, the assumptions behind data center design start to change.

2-1. HBM Will Not Disappear, but “Doing Everything with HBM” Will Change

First, CXL is not a technology that replaces HBM. Ultra-fast memory close to the GPU will continue to be central to AI compute.

Looking at NVIDIA’s Rubin / Vera architecture, this has not changed. HBM4 sits on the GPU side, and large-capacity LPDDR5X sits on the CPU side. What is happening is not “HBM versus something else” — it is the addition of a larger memory tier outside the ultra-fast layer near the chip.

In AI infrastructure, the dominant approach has been to place the fastest possible memory as close to the chip as possible. That remains valuable.

But considering price, capacity, supply, and packaging constraints, “doing everything with expensive near-chip memory” has limits. The Information also notes that rising memory prices are driving companies toward CXL.

To me, it looks like a practical division of labor is emerging: HBM on the front line, CXL memory as rear support.
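One way to reason about this division of labor is a weighted average over tiers: the more accesses land in the fast tier, the less the slower tiers hurt. The sketch below uses relative cost units that are placeholders I chose for illustration (HBM = 1, CPU-side memory = 3, CXL memory = 8), not measured latencies.

```python
def average_access_cost(tiers):
    """tiers: list of (fraction_of_accesses, relative_cost) pairs.

    Returns the access-weighted average cost. Fractions must sum to 1.
    """
    total = sum(fraction for fraction, _ in tiers)
    if abs(total - 1.0) > 1e-9:
        raise ValueError("access fractions must sum to 1")
    return sum(fraction * cost for fraction, cost in tiers)


# Placeholder relative costs, NOT measurements.
HBM, CPU_MEM, CXL_MEM = 1, 3, 8

# Working set mostly fits in HBM.
mostly_hot = [(0.90, HBM), (0.08, CPU_MEM), (0.02, CXL_MEM)]
# Working set spills heavily into the outer tiers.
spilling = [(0.60, HBM), (0.20, CPU_MEM), (0.20, CXL_MEM)]

print(round(average_access_cost(mostly_hot), 2))  # 1.3
print(round(average_access_cost(spilling), 2))    # 2.8
```

Under these assumed numbers, the outer tiers add capacity at modest cost only as long as hot data stays near the chip, which is the "front line" and "rear support" split described above.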

2-2. The Limits of Copper and Electrical Signals Are Pushing the Move to Optical

However, expanding CXL alone does not solve everything. The next wall is the transmission itself.

Electrical signals become harder to manage over distance. At higher speeds, signal degradation, power consumption, heat, and cooling become increasingly serious.

CXL 3.1, based on PCIe 6.1, extends bandwidth. But when you try to pool memory at scale — including memory that is physically far away — the burden on the physical layer actually increases. Longer distances mean harder wiring, more power, more heat, and more difficult signal management.

In short, even with CXL providing the shared memory framework, the wiring and signal transmission behind it become the bottleneck. This is where optical interconnects help, and it is why Google uses OCS and WDM in Jupiter, its large-scale internal data center network.

Google has already built operational experience with optical networks, both in its TPU interconnect and in Jupiter. NVIDIA, meanwhile, is moving in the same direction through large investments in optical component companies like Lumentum and Coherent.

The two trends, expanding memory sharing through CXL and relieving physical constraints through optical interconnects, appear to be converging.

2-3. Beyond That, the Design of Data Centers Themselves May Change

Google positions OCS not merely as a technology for fast connections, but as an optical network that can reconfigure how things are connected based on demand.

In both Jupiter and TPU clusters, what matters is not just “being connected,” but “being able to change connections as needed.”
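The "change connections as needed" idea can be sketched as a reconfigurable one-to-one mapping between ports. The class below is a toy model with hypothetical names; it is not Google's implementation or API, and it ignores switching time and optical power budgets.

```python
class OpticalCircuitSwitch:
    """Toy model of an OCS: a reconfigurable one-to-one mapping between ports."""

    def __init__(self):
        self._peer = {}  # port -> port it is currently connected to

    def connect(self, a: str, b: str) -> None:
        """Create a circuit a<->b, tearing down any circuit either port was in."""
        for port in (a, b):
            self.disconnect(port)
        self._peer[a] = b
        self._peer[b] = a

    def disconnect(self, port: str) -> None:
        """Remove the circuit that includes this port, if there is one."""
        other = self._peer.pop(port, None)
        if other is not None:
            self._peer.pop(other, None)

    def peer(self, port: str):
        """Return the port connected to this one, or None if it is free."""
        return self._peer.get(port)


ocs = OpticalCircuitSwitch()
ocs.connect("rack1", "rack2")
ocs.connect("rack3", "rack4")

# Demand shifts: rack1 now needs a path to rack3 instead.
ocs.connect("rack1", "rack3")

print(ocs.peer("rack1"))  # rack3
print(ocs.peer("rack2"))  # None
print(ocs.peer("rack4"))  # None
```

No cable is moved in this model: the same ports are simply remapped, and the ports that were released (rack2, rack4) become available for new circuits.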

If CXL-based shared memory and optically reconfigurable connections mature, future data centers may be optimized not by “how many memory modules are in this server,” but by “how dynamically the entire building can reassign compute and memory resources.”

I find this significant. It is not that CXL alone is remarkable. What matters is the possibility of moving memory ownership from fixed to shared, connections from copper to optical, and the design unit from individual servers to the data center as a whole.

If this direction continues, data centers could become considerably leaner. The physical constraints that have held them back — memory placement, wiring, power, cooling — may gradually ease, changing how racks are organized and how resources are used. The result could be data centers that operate more efficiently and more cost-effectively overall.

3. Concerns

I have been fairly positive so far, but there are counterarguments and concerns worth noting.

3-1. Google Itself Has Published a Case Against CXL Memory Pooling

It is important to note that Google Research published a paper titled “A Case Against CXL Memory Pooling.” It argues that CXL memory pooling has three problems: cost, complexity, and limited utility.

The key points are that CXL has significantly higher latency than main memory, that it requires new cabling and switch infrastructure in parallel to Ethernet, and that the cost of this infrastructure may cancel out any savings from reduced RAM.
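The cost part of that argument is a break-even question: pooling only pays off if the DRAM it avoids is worth more than the fabric it requires. The sketch below uses entirely hypothetical prices and quantities, chosen only to show the shape of the calculation, and it does not model the latency penalty.

```python
def pooling_net_saving(dram_saved_gb: float, dram_cost_per_gb: float,
                       fabric_cost: float) -> float:
    """Positive: pooling saves money. Negative: the fabric costs more than the DRAM avoided."""
    return dram_saved_gb * dram_cost_per_gb - fabric_cost


def break_even_dram_gb(dram_cost_per_gb: float, fabric_cost: float) -> float:
    """GB of DRAM that pooling must avoid buying to pay for the fabric."""
    return fabric_cost / dram_cost_per_gb


# Hypothetical inputs, not real prices.
DRAM_COST_PER_GB = 5.0   # assumed cost per GB of DRAM
FABRIC_COST = 15_000.0   # assumed cost of CXL switches, cabling, and controllers

print(pooling_net_saving(2_000, DRAM_COST_PER_GB, FABRIC_COST))  # -5000.0
print(break_even_dram_gb(DRAM_COST_PER_GB, FABRIC_COST))         # 3000.0
```

With these assumed inputs, avoiding 2,000 GB of DRAM is not enough: the pool would need to avoid 3,000 GB before the fabric pays for itself.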

This is a serious counterargument. It means Google is experimenting with CXL in production while its own research arm still considers it far from a universal solution. This is one of the bigger concerns.

3-2. Industry-Wide Coordination Remains Difficult

The Information also explains that a major obstacle for CXL is that CPUs, GPUs, memory, and software all need to speak the same language.

Every time the standard evolves, the change ripples through processor designs, controllers, switches, memory modules, and server compatibility testing.

Technologies like this do not spread on ideals alone. “Google and NVIDIA might be able to do it, but the broader industry will take time” — that concern seems reasonable to me.

3-3. Optical Interconnects Are Not a Universal Solution Either

Google emphasizes the benefits of OCS, but those benefits are also the result of years of custom design, in-house operations, and SDN integration.

Other companies cannot easily replicate the same setup. The fact that the Open Compute Project (OCP) is working on OCS interoperability means that standardization and ecosystem building are still in progress.

Optical components also face high barriers in manufacturing difficulty, integration, maintenance, and reliability testing. NVIDIA’s large investments in Coherent and Lumentum reflect the fact that this technology still takes time to reach widespread adoption — one could say they are buying time with money.

4. Summary

I think the significance of CXL goes beyond simply adding more memory. What seems more important is that the idea of sharing memory across servers is becoming practical, and this is converging with a move toward optical interconnects to address the physical limits of electrical wiring.

If this trend continues, data centers could become considerably leaner. The physical constraints that have been holding them back (memory placement, wiring, power, and cooling) may gradually ease, changing how racks are organized and how resources are allocated. The result could be more efficient and more cost-effective data center operations overall.

We are moving from a time when watching HBM alone was sufficient, to a time when understanding memory tiering, sharing, and the optical physical layer is necessary to see the full picture.
