NVIDIA's Trillion-Dollar Compute Vision: How Inference Chip Integration Reveals an All-Encompassing AI Ecosystem

At GTC 2026, Jensen Huang’s roadmap was not just about next-generation chip performance. It was a strategic move to combine NVIDIA’s dominance in training with external inference technology and agent deployment tools, pulling every part of the AI process into NVIDIA’s own economic sphere.

While the world focused on new chips like Blackwell and Vera Rubin, the more significant change was this: NVIDIA has started absorbing technologies that were previously considered rivals, rewriting the AI stack from end to end under its own standard.

Behind the headline $1 trillion sales-opportunity figure lies NVIDIA’s clear intent to control the full compute pipeline, from training to deployment.

Reuters reported that NVIDIA projects over $1 trillion in sales opportunity from Blackwell and Rubin by 2027, and that GTC this year placed particular emphasis on inference products.

What We Can Confirm

The key facts from this announcement and the surrounding market developments are as follows.

$1 Trillion Cumulative Sales Opportunity

NVIDIA indicated that sales opportunities from Blackwell and Rubin products would exceed $1 trillion over the three years from 2025 to 2027. Reuters noted this was a significant increase from last year’s $500 billion projection, and that the figure still excludes Groq-related revenue and legacy chip sales to China.

Groq Integration and a Full Push into Inference

Reuters reported that NVIDIA used GTC to showcase inference systems built on Groq technology, positioning inference as a clear growth axis. NVIDIA’s official announcement listed Groq 3 LPX inference accelerator racks as a component of the Vera Rubin platform.

Samsung for Supply Chain Diversification

Reuters reported that Samsung Electronics will manufacture NVIDIA’s new inference chip using a 4-nanometer process. This signals NVIDIA’s effort to expand production capacity for inference components beyond sole reliance on TSMC.

Intel’s Involvement Signals a Transitional Design

Some reports indicate that Intel processors are used for communication management within the new Groq-integrated rack configuration. This suggests that as NVIDIA rapidly absorbs inference-specialized chips into its ecosystem, it is not yet a fully self-contained design — it is pulling in external components where needed to move quickly.

Cerebras: A Competing Architecture Still in Play

Bloomberg reported that Cerebras has tapped Morgan Stanley and may return to an IPO as early as April. This indicates that the AI semiconductor market — including inference and specialized workloads — has not yet consolidated into a single dominant architecture, and that capital markets still see opportunity in alternative approaches.

NemoClaw: An Agent Development Framework

Reports indicate that NVIDIA announced NemoClaw, a framework for building safer, enterprise-grade AI agents. This is not just about providing models — it is a move to control the execution layer where AI agents actually operate inside organizations.

Layer-by-Layer Analysis

NVIDIA is working to enclose its ecosystem across every layer of AI infrastructure.

Processor Layer: Training + Inference Convergence

While maintaining its dominant position in GPU-based training, NVIDIA has integrated Groq’s inference technology. Because both training and inference can now be completed within NVIDIA’s own rack systems, customers have less reason to migrate to competitors like Cerebras, which sell on inference speed and efficiency.

Manufacturing Layer: Samsung and Reduced TSMC Dependency

By using Samsung’s 4-nanometer manufacturing, NVIDIA is broadening its inference chip supply beyond sole dependence on TSMC. In my view, this is not just about cost — it is a manufacturing encirclement strategy that accounts for geopolitical risk and supply constraints. As the inference market grows, diversifying the supply base itself carries significant strategic value.

Implementation Layer: Intel Usage Shows Pragmatism

Reports of Intel processors being used for communication management in the Groq-integrated rack point to something important. Rather than insisting on a fully proprietary stack, NVIDIA is prioritizing speed to market in inference, incorporating even Intel’s components where necessary. I think this is where NVIDIA’s real strength lies. It does not just confront competitors head-on; it absorbs the pieces it needs into its own platform.

Software Layer: Agent Execution Standards

With NemoClaw, NVIDIA is claiming the standard for how AI performs work — the interface through which agents operate. By providing a safe execution environment, NVIDIA can channel the massive inference compute that runs behind these agents back into its own infrastructure.

Capital Layer: Self-Reinforcing Demand

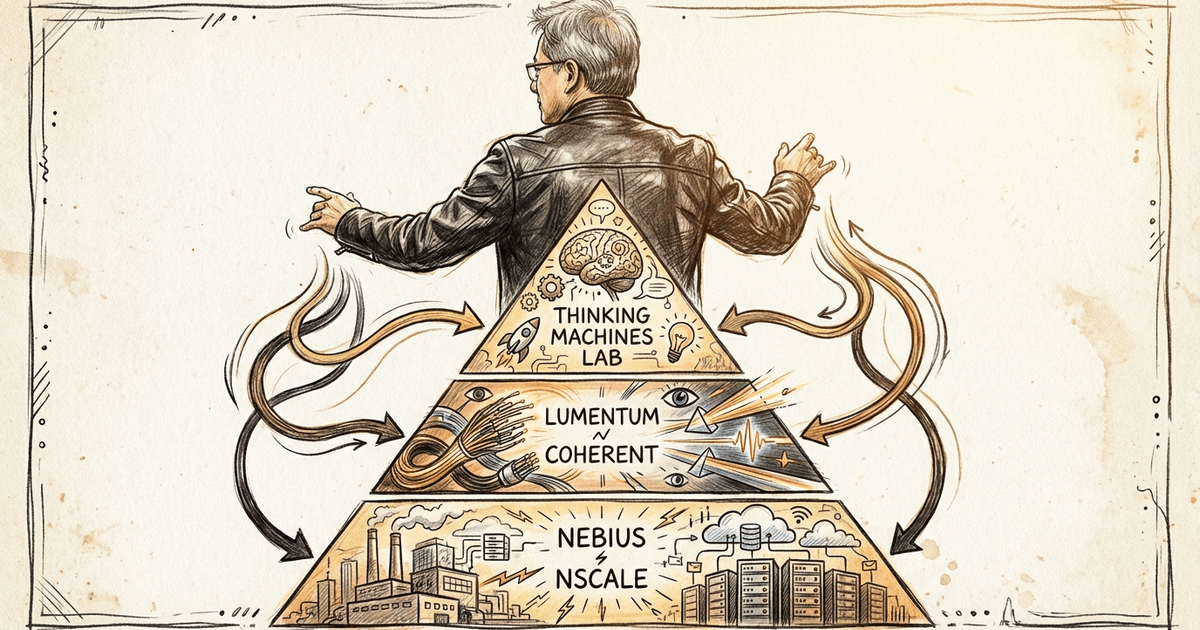

NVIDIA invested $2 billion in Nebius, which in turn signed an AI capacity deal with Meta worth up to $27 billion. This means NVIDIA is not just selling chips — it is investing in the very entities that consume those chips, amplifying demand within its own ecosystem.

My Take

What this tells me is that NVIDIA is not content with being the dominant player in training alone. It is working to pull every element of AI — inference, agent tooling, manufacturing, capital — into a single orbit centered on itself.

With Cerebras approaching an IPO, the timing is notable. NVIDIA has absorbed Groq’s inference technology, expanded manufacturing through Samsung, pragmatically used Intel components where needed, and started providing agent execution infrastructure through NemoClaw. In effect, it has turned its rivals’ advantages into components of its own platform.

Even as AI models themselves become commoditized, NVIDIA is packaging the hardware to run them fast and safely, the software to control them, and the manufacturing and capital to sustain them. As a result, any company offering AI services may find itself operating entirely within NVIDIA’s ecosystem without even realizing it.

This is not a strategy of eliminating competition. It is a strategy of neutralizing the value of competition by incorporating it into NVIDIA’s own system. I find this to be a remarkably sophisticated approach.

Alternative Perspectives

This bullish growth scenario does carry structural risks.

Integration Cost and Technical Debt

If Intel processors are indeed used for communication management in the Groq-integrated rack, that also indicates the integration is still in a transitional phase. Rushing into the inference market could saddle NVIDIA with complex integration debt down the road.

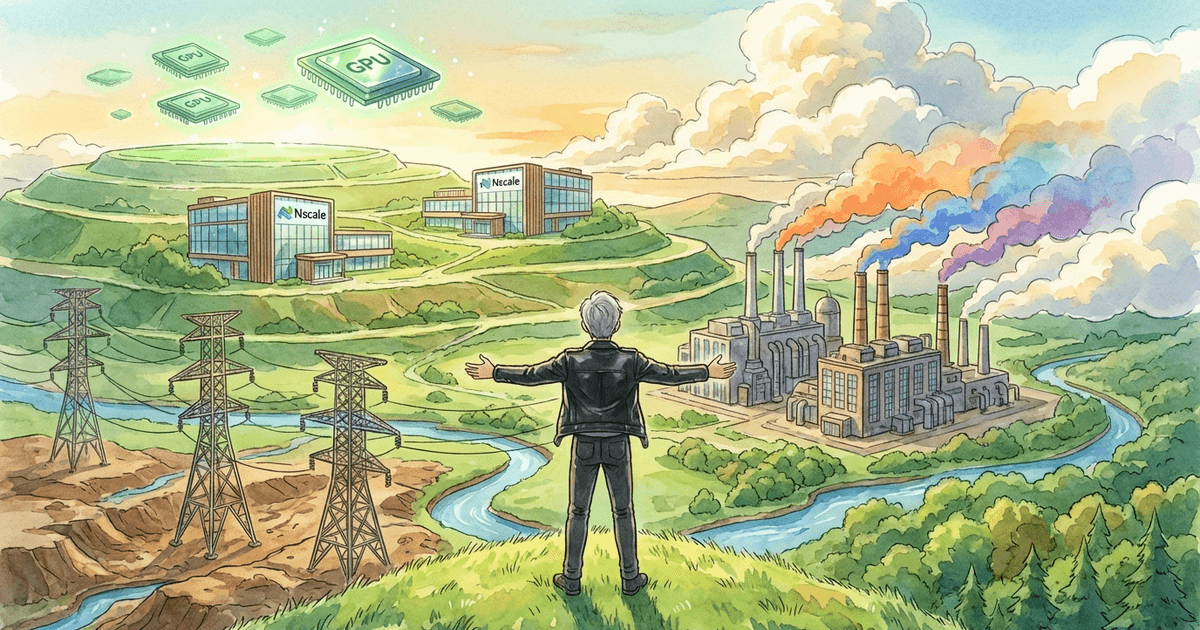

Physical Layer Bottlenecks

Supporting $1 trillion in infrastructure requires vast land and enormous power supply. Even as semiconductor supply advances through Groq integration and Samsung manufacturing, physical constraints — data center siting, grid connections, community acceptance — remain separate challenges.

Summary

What makes this significant is that the competition for AI leadership is no longer about individual chip performance.

The battleground now spans training, inference, agent deployment, manufacturing diversification, and capital circulation.

This GTC showed NVIDIA’s effort to pull all of these elements into a single, massive ecosystem — illustrated by the $1 trillion figure and the involvement of Groq, Samsung, and Intel.

Join the conversation on LinkedIn — share your thoughts and comments.

Discuss on LinkedInRelated Posts

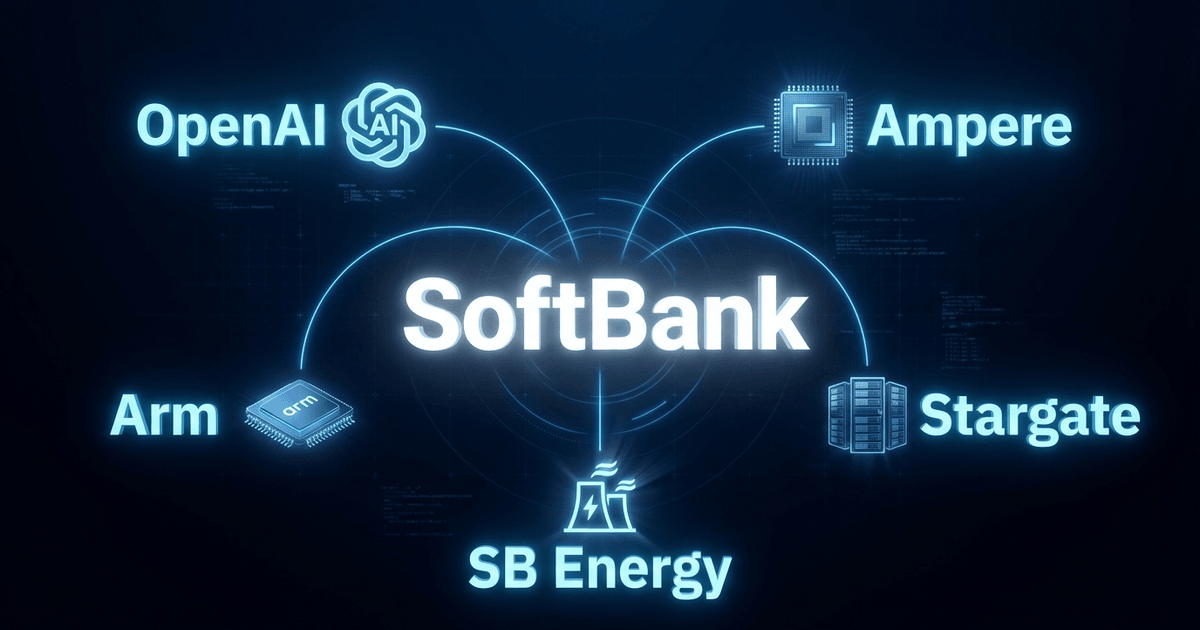

From Cluster Strategy to Vertical Integration — SoftBank's Push to Build a Chip-Power-DC Unified AI Empire

What It Means That Nvidia-Backed Nscale Is Pursuing an 8GW Data Center Site