Commanding the AI Infrastructure Balance: Meta's Strategic Vertical Integration

Big Tech’s AI infrastructure investments have reached staggering scales, but Meta’s strategy stands apart in its sophistication. Far from being a passive chip buyer, Meta strategically plays NVIDIA and AMD against each other, securing its own commanding position in the process.

PyTorch: The Nucleus That Neutralizes Hardware Lock-In

At the core of Meta’s strategy lies PyTorch — the deep learning framework it developed and nurtured into the industry’s de facto standard.

PyTorch is far more than a library. It functions as an abstraction layer that absorbs most of the differences between hardware platforms. Because ROCm builds of PyTorch expose AMD GPUs through the same torch.cuda interface used for NVIDIA hardware, most model code written in Python runs on either vendor’s GPUs without the developer handling CUDA or ROCm directly. This gives Meta the initiative to place workloads on either NVIDIA or AMD GPUs.
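To make the abstraction concrete, here is a minimal sketch of device-agnostic PyTorch code. The function names `backend_name` and `matmul_on_best_device` are my own illustrative helpers, not part of PyTorch, and the optional import is only there so the sketch loads on a machine without PyTorch installed. It is not a description of how Meta runs its own workloads.

```python
# Sketch: device-agnostic PyTorch code. Nothing below names a GPU vendor
# when doing the actual computation.
try:
    import torch
except ImportError:  # assumption: allow the sketch to load without PyTorch
    torch = None


def backend_name() -> str:
    """Report which GPU stack this PyTorch build is using, if any."""
    if torch is None or not torch.cuda.is_available():
        return "cpu"
    # ROCm builds reuse the torch.cuda namespace and set torch.version.hip
    # to a version string; CUDA builds leave it as None.
    return "rocm" if getattr(torch.version, "hip", None) else "cuda"


def matmul_on_best_device(n: int = 256):
    """Run the same matrix multiply on whatever accelerator is present."""
    if torch is None:
        return None
    # The "cuda" device string addresses NVIDIA GPUs on CUDA builds and
    # AMD GPUs on ROCm builds, so this line is identical on both vendors.
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    a = torch.randn(n, n, device=device)
    b = torch.randn(n, n, device=device)
    return (a @ b).shape


if __name__ == "__main__":
    print(backend_name(), matmul_on_best_device())
```

The only place the vendor shows up is in the reporting helper. The compute path itself is the same Python on NVIDIA, AMD, or CPU, which is the property the lock-in argument rests on.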

This architecture helps Meta avoid surrendering pricing power to any single chip vendor: it can shift workloads toward whichever compute resources are most cost-effective at the time. It is, in essence, an engineering-driven defense against vendor lock-in.

Strategic Tiering for the Next Frontier

Meta has entered a partnership with NVIDIA to co-design next-generation data centers.

Based on my assessment as an engineer, Meta appears to be segmenting the roles of NVIDIA and AMD at a level that goes beyond conventional LLM development.

Tier 1 (Cutting-Edge Multimodal): NVIDIA

Meta’s primary focus today is multimodal model development, the kind of visual and audio processing exemplified by the hit Ray-Ban Meta smart glasses. In my view, Google’s TPUs are tuned primarily for LLM workloads and are less suited to this type of complex, general-purpose computation. That is why Meta is co-designing at the data center level with NVIDIA: to counter Google’s vertically integrated approach with the highest-performance, most versatile hardware available.

Tier 2 (Commodity Workloads): AMD

Meanwhile, everyday recommendation engines, on-device inference for smartphones, and the vast base layer of compute don’t require NVIDIA’s premium silicon. Preventing NVIDIA from monopolizing this tier is the key to protecting Meta’s cost structure.

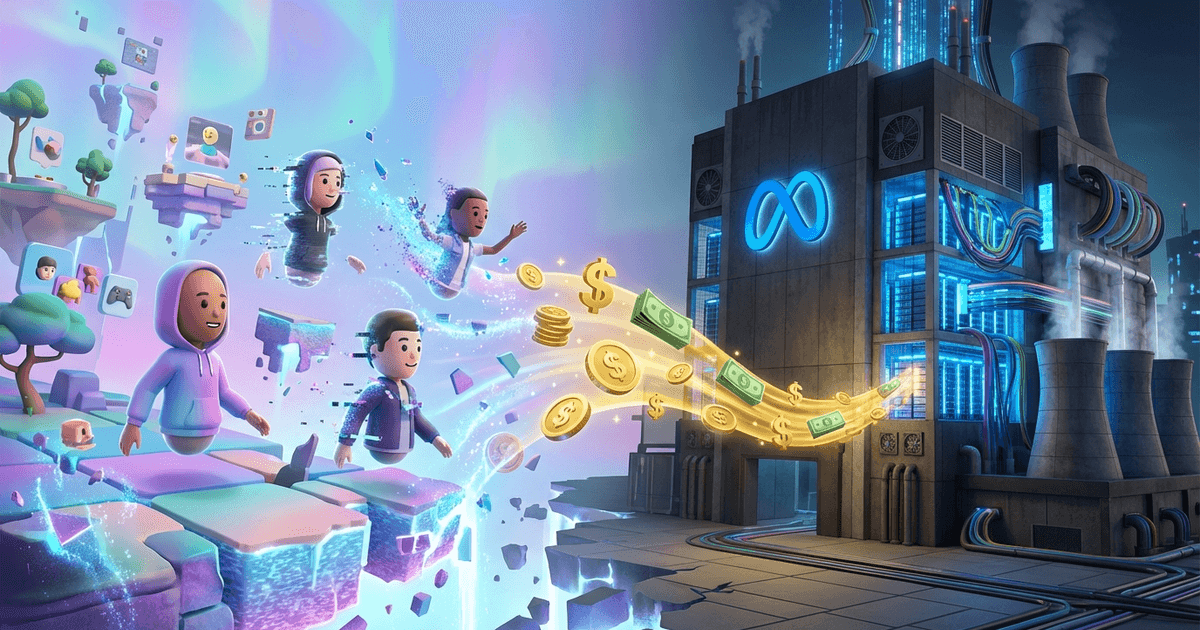

Financial Symbiosis with AMD: Turning Risk into Profit

AMD underpins this Tier 2 strategy. Meta has not only secured a massive 6 gigawatts (6 GW) of compute capacity from AMD, but also obtained warrants to acquire approximately 10% of AMD’s stock at near-zero cost.

“Buy chips in volume, cultivate AMD into a formidable competitor, and offset hardware costs through AMD’s stock appreciation.” This financial scheme exemplifies the sophisticated fusion of engineering and corporate strategy that defines Meta’s approach.

Conclusion: Controlling the Gatekeepers of Physics and Intelligence

Rather than pursuing Google’s path of complete in-house development, Meta harnesses the power of existing industry giants while layering PyTorch — a universal language — on top. Through this approach, Meta is positioning itself to control the entire infrastructure stack.

Meta is completing an exceptionally rational and sophisticated form of vertical integration: commanding the physical platforms of the multimodal era on its own terms.
