The Existential Crisis of AI Economics — OpenAI's Margin Squeeze and the Inference Chip Wars

According to a recent report from The Information, OpenAI’s gross margin dropped from 40% to 33% in 2024 — far below its own forecast of 46%. For a subscription-based AI service at this scale, this is a notably thin margin.
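Gross margin is revenue minus the cost of delivering the service, divided by revenue. As a rough sketch, the gap between the forecast and the reported figure becomes clearer when you ask how much each dollar of revenue cost to serve. Here revenue is normalized to $1, which is an illustrative simplification.

```python
def gross_margin(revenue: float, cost_of_revenue: float) -> float:
    """Gross margin as a fraction: (revenue - cost of revenue) / revenue."""
    return (revenue - cost_of_revenue) / revenue


def cost_per_revenue_dollar(margin: float) -> float:
    """Cost of revenue implied by a gross margin, per $1 of revenue."""
    return 1.0 - margin


# Margins cited in the report; revenue is normalized to $1 for illustration.
forecast_margin, actual_margin = 0.46, 0.33
planned_cost = cost_per_revenue_dollar(forecast_margin)
actual_cost = cost_per_revenue_dollar(actual_margin)
overrun = actual_cost / planned_cost - 1  # relative cost overshoot versus plan

print(f"Planned cost per $1 of revenue: ${planned_cost:.2f}")
print(f"Actual cost per $1 of revenue:  ${actual_cost:.2f}")
print(f"Cost overrun versus plan: {overrun:.0%}")
```

In other words, a 13-point miss on margin means serving costs ran roughly a quarter above plan.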

The culprit is the rapid growth of inference costs. Last year, OpenAI paid cloud providers more for inference than the $6.6 billion initially projected. By 2030, this figure is expected to reach $85 billion per year.

This cost structure, in which every token served bleeds money, is the single biggest barrier to profitability. The battle for AI supremacy is shifting from “who can build the smartest model” (training) to “who can produce tokens cheapest and fastest” (inference), a competition fought at the level of silicon physics.
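The “cheapest and fastest” framing is really a single equation: an accelerator costs roughly the same per hour whether it is busy or idle, so the cost of each token is that hourly cost divided by the number of tokens produced in the hour. A minimal sketch follows; the $4-per-hour accelerator price is a hypothetical figure chosen purely for illustration.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Serving cost per 1M tokens for one accelerator at steady throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


HOURLY_COST_USD = 4.0  # hypothetical all-in price of one accelerator-hour

for tps in (500, 1_000, 2_000):
    cost = cost_per_million_tokens(HOURLY_COST_USD, tps)
    print(f"{tps:>5} tokens/s -> ${cost:.2f} per 1M tokens")
```

Doubling throughput on the same hardware halves the per-token cost, which is why inference speed and inference economics are the same race.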

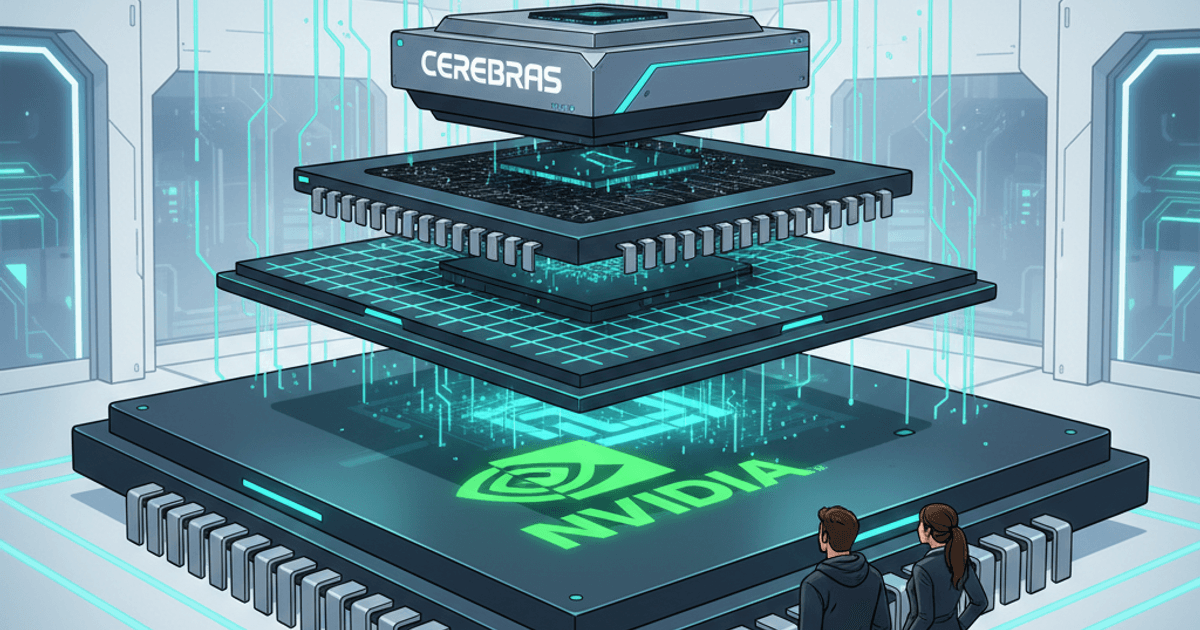

NVIDIA’s Training Throne and Its Inference Achilles’ Heel

In the training phase, NVIDIA’s GPUs reign supreme: their massively parallel compute, fed by High Bandwidth Memory (HBM), offers unmatched versatility. But in the inference phase, where tens of thousands of responses are generated every second, the constant shuttling of model weights between expensive HBM and the compute cores becomes a source of latency and wasted power. This bottleneck is known as the Memory Wall.
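Back-of-the-envelope roofline arithmetic makes the Memory Wall concrete. When a model generates text one token at a time for a single request, every weight must be read from memory once per token, so memory bandwidth, not compute, caps the speed. The sketch assumes a hypothetical 70-billion-parameter model stored in 16-bit weights, about 3.35 TB/s of HBM bandwidth, and about 10^15 FLOP/s of peak compute. These are illustrative figures in the range of current high-end GPUs, not measurements of a specific product, and the model ignores KV-cache and activation traffic.

```python
def decode_bound_tokens_per_s(params: float, bytes_per_param: float,
                              mem_bw_bytes_per_s: float) -> float:
    """Upper bound on single-request decode speed if all weights are read once per token."""
    weight_bytes = params * bytes_per_param
    return mem_bw_bytes_per_s / weight_bytes


def arithmetic_intensity(batch_size: int, bytes_per_param: float) -> float:
    """FLOPs per byte of weights read: ~2 FLOPs per parameter per request in the batch.
    Ignores KV-cache and activation traffic."""
    return 2 * batch_size / bytes_per_param


# Illustrative assumptions, not vendor specifications.
PARAMS = 70e9          # hypothetical 70B-parameter model
BYTES_PER_PARAM = 2    # 16-bit weights
HBM_BW = 3.35e12       # bytes/s of off-chip memory bandwidth
PEAK_FLOPS = 1e15      # peak dense FLOP/s

bound = decode_bound_tokens_per_s(PARAMS, BYTES_PER_PARAM, HBM_BW)
ridge = PEAK_FLOPS / HBM_BW  # intensity needed before compute, not memory, is the limit

print(f"Decode ceiling at batch size 1: ~{bound:.0f} tokens/s")
print(f"Intensity at batch size 1: {arithmetic_intensity(1, BYTES_PER_PARAM):.0f} FLOP/byte "
      f"vs. ~{ridge:.0f} FLOP/byte needed to keep the compute units busy")
```

Batching many requests raises the FLOPs performed per byte fetched, which is how GPU inference stacks recover utilization. Architectures that keep weights next to the compute attack the same bottleneck from the memory side instead.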

If OpenAI continues to rely solely on NVIDIA GPUs for inference, its business model could become unsustainable. That is the essence of the current crisis.

The Challengers: SRAM’s Counterattack on Inference Costs

A new generation of chip startups, built on fundamentally different architectures, is now addressing this gap.

- Cerebras: Its massive “wafer-scale” chip fits an entire model in SRAM (static random-access memory) located directly on the processor, eliminating round trips to external memory. OpenAI’s reported $1 billion deal with Cerebras is a bet on this radical inference efficiency.

- Groq: Its proprietary LPU (Language Processing Unit) maximizes sequential processing speed, making it ideal as the “nervous system” for real-time AI agents. Notably, NVIDIA signed a $20 billion deal in December 2025 to acquire key talent and license technology from Groq, effectively an acknowledgment by the king that its inference capabilities needed reinforcement.

- Etched: Abandoning general-purpose flexibility, Etched has hardwired the Transformer architecture directly into an ASIC, targeting more than 20x the efficiency of GPUs. This is inference specialization taken to its logical extreme.

- Tenstorrent: Led by legendary chip architect Jim Keller, this company pursues better cost-performance through a flexible, RISC-V-based architecture.
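The trade-off behind the SRAM approach is capacity: on-chip SRAM is far faster than HBM but holds far less, so a large model has to be spread across enough silicon to hold all of its weights. The sketch below counts the chips needed under illustrative capacities of roughly 230 MB of SRAM per chip for an LPU-style design and 44 GB per wafer for a wafer-scale design. Treat both figures and the 70B-parameter model as assumptions; the count also ignores KV-cache, activations, and memory reserved by the runtime.

```python
import math


def chips_needed(params: float, bytes_per_param: float, sram_bytes_per_chip: float) -> int:
    """Minimum number of chips whose combined SRAM can hold every weight.
    Ignores KV-cache, activations, and runtime overhead."""
    weight_bytes = params * bytes_per_param
    return math.ceil(weight_bytes / sram_bytes_per_chip)


# Illustrative capacities (assumptions, not vendor-verified specifications).
SRAM_PER_CHIP = {
    "LPU-style chip (~230 MB)": 230e6,
    "wafer-scale chip (~44 GB)": 44e9,
}

PARAMS = 70e9  # hypothetical 70B-parameter model
for bits in (16, 8):
    for name, capacity in SRAM_PER_CHIP.items():
        n = chips_needed(PARAMS, bits / 8, capacity)
        print(f"{bits}-bit weights on {name}: {n} chip(s)")
```

This is why SRAM-first designs are built to scale out across many chips or wafers, and why storing weights in fewer bits matters so much to them.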

My Perspective: The “Two-Tier” Infrastructure

I believe AI infrastructure is heading toward a clear bifurcation:

- Training & Advanced Inference (NVIDIA): The unwavering “wellspring of intelligence” that builds the foundation of models.

- Everyday Inference (Cerebras / Groq / Etched / TPU): The “mass production factory” that drives the per-token cost to its absolute minimum.
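The payoff of this split can be sketched as a weighted average: if a fraction of token traffic moves to a cheaper inference tier, the blended cost per token falls in proportion. The per-million-token prices below are hypothetical placeholders, not quoted figures for any vendor.

```python
def blended_cost(share_on_cheap_tier: float, gpu_cost: float, cheap_cost: float) -> float:
    """Average cost per 1M tokens when a share of traffic runs on a cheaper inference tier."""
    if not 0.0 <= share_on_cheap_tier <= 1.0:
        raise ValueError("share_on_cheap_tier must be between 0 and 1")
    return share_on_cheap_tier * cheap_cost + (1.0 - share_on_cheap_tier) * gpu_cost


GPU_COST = 1.00    # hypothetical $ per 1M tokens on general-purpose GPUs
CHEAP_COST = 0.40  # hypothetical $ per 1M tokens on a specialized inference tier

for share in (0.0, 0.5, 0.9):
    print(f"{share:.0%} of tokens on the inference tier -> "
          f"${blended_cost(share, GPU_COST, CHEAP_COST):.2f} per 1M tokens")
```

If everyday inference is where most tokens are generated, as the “mass production factory” label suggests, even a modest per-token discount on that tier moves the blended figure substantially.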

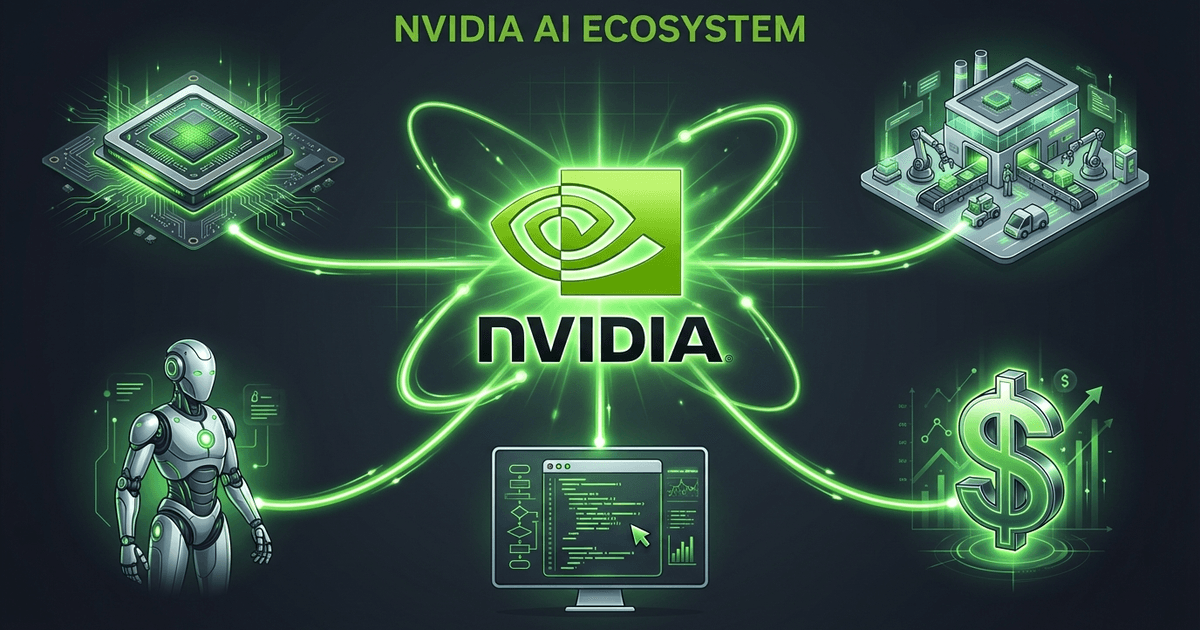

Just as Google has leveraged its proprietary TPUs to contain inference costs through vertical integration, OpenAI is now pivoting toward Cerebras and its own custom chips (co-developed with Broadcom).

Conclusion: The True Winners of the AI Economy

The key to profitability is no longer the IQ of a large language model. It is how efficiently intelligence can be etched into silicon — a battle fought in the domain of physics.

When the cost per token becomes negligible, AI will finally transcend being a “tool” and dissolve into our daily lives through smart glasses and other wearables, becoming an extension of ourselves.
