AI Competition Shifts from Models to Power Grids: The New Bottleneck of the Data Center Era

Over the past year or two, AI competition has been largely framed around “which model is best” and “how many GPUs can you secure.” These remain important questions.

However, what has become increasingly clear in 2026 is that the competitive focus has moved one layer deeper — to the infrastructure that makes data centers (DCs) viable in the first place.

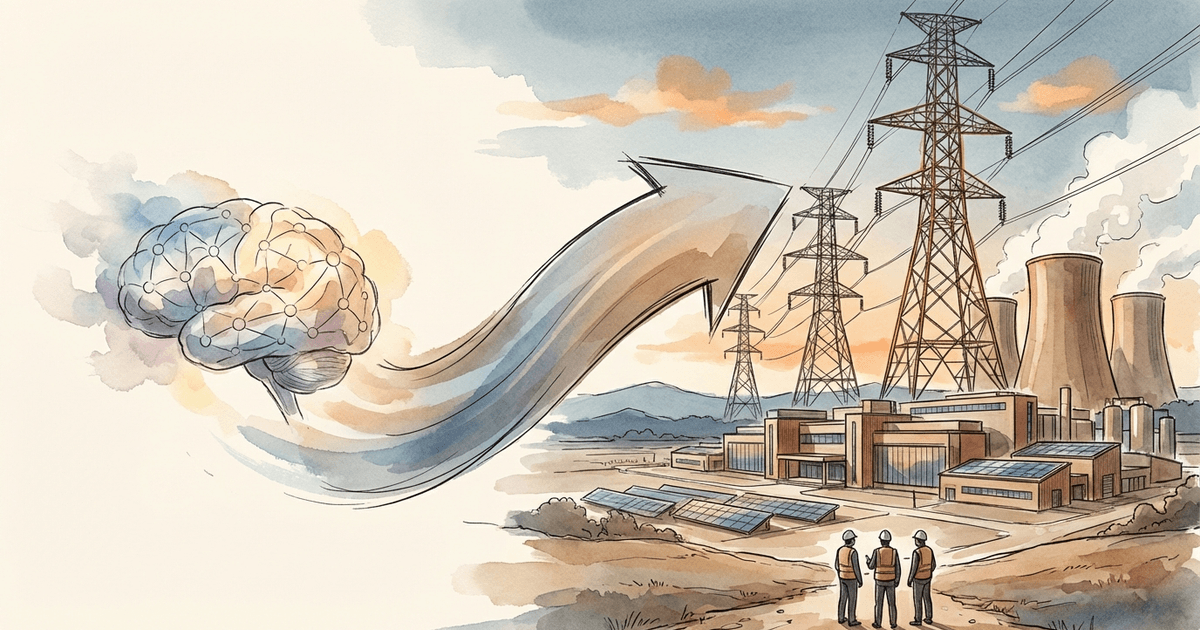

AI competition is now running into constraints in power (generation plus grid), land, and cooling (the water-energy tradeoff) before it runs into limits in model capability. When these bottlenecks bind, even the best model cannot scale. AI has entered its industrial-infrastructure phase.

After GPUs, the Next Shortage Is the Power Grid

AI data centers consume enormous amounts of electricity, and they are being built out rapidly. As a result, issues like “not enough power,” “rising electricity rates,” and “community pushback” are no longer internal concerns of the tech industry. They have become political flashpoints.

A telling example: Trump stated that he had told big tech companies to build their own power plants for data centers, as reported by Reuters.

On the same day, the White House was reported to be hosting big tech for a Ratepayer Protection Pledge aimed at curbing power costs.

By March, major tech companies had signed a pledge not to increase the burden on electricity ratepayers, according to Reuters. The pledge may partly reflect midterm-election politics, but the fact that data center power costs have become a political issue at all is significant.

The key takeaway is that AI scaling is now constrained by securing power and community consent before it is constrained by model quality. AI competition is becoming a game of regulation, local politics, and infrastructure planning — not just capital allocation.

The Corporate Response to Power Shortages: Long-Duration Storage as Strategy

When the grid is congested, simply adding generation capacity is not enough. In regions with high renewable penetration, storage that smooths output variability becomes critical to DC uptime. Iron-air batteries are emerging as a notable solution here.

Form Energy describes its iron-air battery technology as targeting up to 100 hours of discharge, which spans multiple days.

TechCrunch also reported that Google’s new 1.9GW clean energy deal includes a massive 100-hour battery, highlighting how long-duration storage is becoming integral to DC power strategy.
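To make “100 hours of discharge” concrete: a battery’s stored energy is its power rating multiplied by its discharge duration, and it can never supply more power than its rating. The minimal Python sketch below illustrates this with purely hypothetical figures (a 100 MW battery, an 80 MW load, and a 4-hour battery for comparison). None of these numbers come from Form Energy or the Google deal.

```python
# Minimal sketch: energy capacity of a long-duration battery.
# All figures are hypothetical and for illustration only.

def energy_capacity_mwh(power_mw: float, duration_h: float) -> float:
    """Energy (MWh) needed to deliver power_mw continuously for duration_h hours."""
    return power_mw * duration_h


def hours_of_cover(capacity_mwh: float, power_mw: float, load_mw: float) -> float:
    """Hours a fully charged battery can carry a constant load with no other supply.

    The load cannot exceed the battery's power rating, however much energy is stored.
    """
    if load_mw > power_mw:
        raise ValueError("load exceeds the battery's power rating")
    return capacity_mwh / load_mw


# Hypothetical 100 MW battery rated for 100 hours vs. a hypothetical 4-hour battery.
long_duration = energy_capacity_mwh(100, 100)  # 10,000 MWh
short_duration = energy_capacity_mwh(100, 4)   # 400 MWh

# Carrying a hypothetical 80 MW load, within the battery's power rating.
print(hours_of_cover(long_duration, 100, 80))   # 125.0
print(hours_of_cover(short_duration, 100, 80))  # 5.0
```

The sketch ignores round-trip efficiency and depth-of-discharge limits. Its only point is that duration, not power rating, is what lets storage bridge multi-day lulls in renewable output.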

The message is straightforward. The outcome of “AI competition” is not determined by GPU procurement alone — it increasingly depends on how companies design for power smoothing, resilience, and operating costs. Long-duration storage is no longer just a utility concern; it has entered the core of DC competition.

Cooling: Not Just Water Scarcity, but the Energy Cost of Cooling Water

A frequently overlooked bottleneck is cooling. DC cooling is often discussed in terms of “not enough water,” but the real issue is broader — it is a tradeoff between water and energy.

Depending on the cooling method, operators face choices like “reduce water use but increase power consumption” or “keep power low but require more water.” The optimal solution varies by local conditions: climate, water availability, and electricity pricing. In other words, cooling is less about equipment cost and more about location, operations, and regulation.
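The tradeoff can be framed as a simple cost comparison. The Python sketch below is a hypothetical illustration: the two cooling options, their water and electricity intensities, and the local prices are invented numbers, not measurements of any real system. It shows only how the cheaper option flips as local water and power prices change.

```python
from dataclasses import dataclass


@dataclass
class CoolingOption:
    name: str
    water_m3_per_mwh: float   # water consumed per MWh of IT load (hypothetical)
    power_mwh_per_mwh: float  # cooling electricity per MWh of IT load (hypothetical)


def cooling_cost(opt: CoolingOption, water_price: float, power_price: float) -> float:
    """Cooling cost per MWh of IT load, given local prices ($/m3 water, $/MWh power)."""
    return opt.water_m3_per_mwh * water_price + opt.power_mwh_per_mwh * power_price


def best_option(options: list[CoolingOption], water_price: float,
                power_price: float) -> CoolingOption:
    """Pick the option with the lowest combined water + electricity cost."""
    return min(options, key=lambda o: cooling_cost(o, water_price, power_price))


# Two invented archetypes: water-hungry but power-light vs. the reverse.
options = [
    CoolingOption("evaporative", water_m3_per_mwh=2.0, power_mwh_per_mwh=0.05),
    CoolingOption("air-cooled chiller", water_m3_per_mwh=0.1, power_mwh_per_mwh=0.25),
]

# Water-scarce site with cheap power vs. water-rich site with expensive power.
print(best_option(options, water_price=20.0, power_price=40.0).name)  # air-cooled chiller
print(best_option(options, water_price=1.0, power_price=200.0).name)  # evaporative
```

In practice, water availability and permitting can act as hard limits rather than just prices, which pushes the decision even further toward location and regulation.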

Case Study: NVIDIA Rubin’s Direction Toward Reducing Chiller Dependency

Industry reports indicate that Rubin is designed around single-phase direct liquid cooling with a 45°C warm-water supply temperature, potentially eliminating the need for chillers (large refrigeration units that produce cold water).

The key points are:

- Rubin uses liquid cooling, so a coolant (typically water-based) is still required

- However, the design direction reduces the electrical load needed to produce cold water (chiller load)

- As a result, depending on region and design, water use and cooling costs can be reduced

Rubin’s cooling architecture reflects how cooling, with its combined water and energy costs, has become a binding constraint on DC viability. That chip designers now design around facility-level thermal management and operating costs is a concrete sign of this bottleneck.
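One way to see why a 45°C supply temperature matters: a dry cooler, which rejects heat to outside air without a chiller, can only produce coolant somewhat warmer than the ambient air. The Python sketch below counts the share of hours in which chiller-free operation would be possible. The 8 K approach temperature, the 18°C comparison supply temperature, and the hourly temperatures are all hypothetical assumptions, not Rubin specifications.

```python
# Minimal sketch: how often can a dry cooler alone (no chiller) supply coolant
# at a given temperature? Approach temperature and weather data are hypothetical.

def chiller_free(ambient_c: float, supply_c: float, approach_k: float = 8.0) -> bool:
    """True if outside air is cool enough for a dry cooler to reach supply_c.

    approach_k is an assumed gap between ambient air and the coolant it can produce.
    """
    return ambient_c + approach_k <= supply_c


def chiller_free_share(hourly_ambient_c: list[float], supply_c: float,
                       approach_k: float = 8.0) -> float:
    """Fraction of hours in which no chiller would be needed."""
    ok = sum(1 for t in hourly_ambient_c if chiller_free(t, supply_c, approach_k))
    return ok / len(hourly_ambient_c)


# Hypothetical hourly outdoor temperatures (°C) at a warm site.
temps = [10.0, 25.0, 35.0, 38.0, 40.0]

print(chiller_free_share(temps, supply_c=45.0))  # warm-water design: 0.6
print(chiller_free_share(temps, supply_c=18.0))  # cold-water design: 0.2
```

A real assessment would use a full year of hourly weather data and actual equipment ratings. The point here is only that raising the supply temperature moves many more hours into the chiller-free range, which is why the benefit depends on region.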

The Extreme Solution: SpaceX’s Space Data Center Concept

If power grids, land, water, and permitting on the ground are all congested, the idea of “doing it in space” naturally emerges. Reuters reported that SpaceX has filed with the FCC for a concept involving solar-powered satellite data centers.

This remains firmly in the realm of concept, regulatory filing, and future proposal. The barriers — launch costs, operational complexity, space debris, security, and economics — are substantial.

In my view, this is better understood not as a near-term solution but as a symbol of how severe terrestrial infrastructure constraints have become.

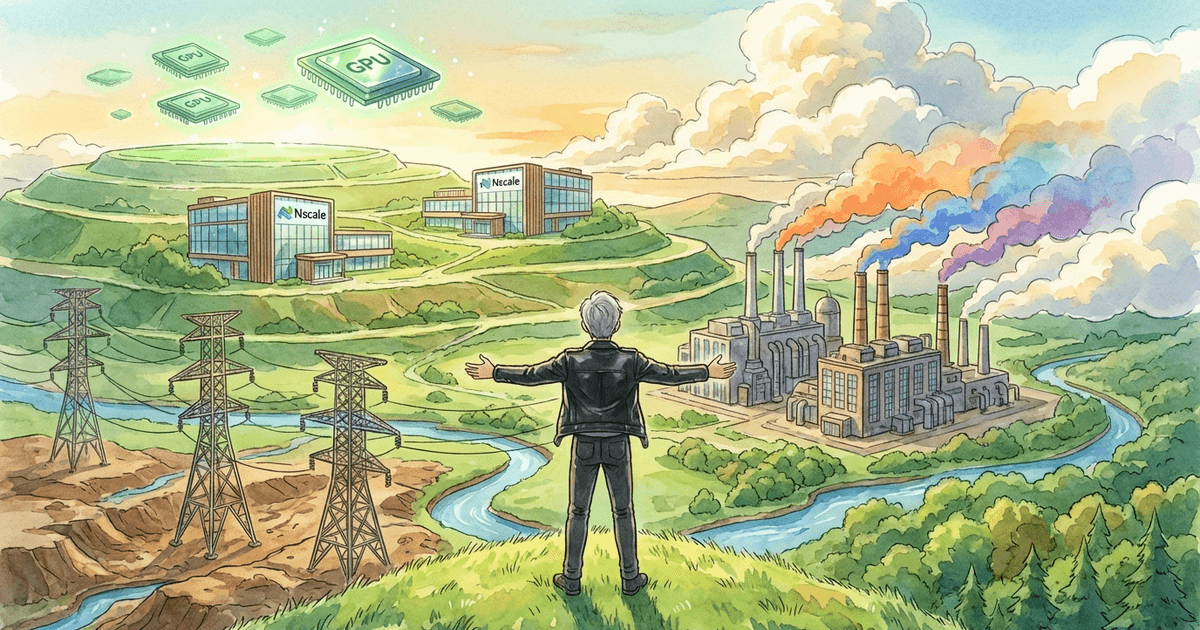

Chip Companies Are Becoming DC Investors

The capital shift toward infrastructure is not limited to the big tech companies that operate DCs. Upstream players in the supply chain, including semiconductor companies themselves, are investing in the DC layer.

Reuters reported that NVIDIA invested $2 billion in CoreWeave, backing expansion that includes land and power procurement for data centers.

This is symbolically important: a company that sells chips is now investing in the infrastructure to run them. Even the most powerful GPU cannot grow the market if there are not enough DCs to deploy it. This is why capital flows toward the conditions that make DCs viable: power, land, cooling, and connectivity.

Counterarguments: Where This Thesis Could Be Wrong

Counterargument 1: Community opposition could politically stall DC construction

DCs generate tax revenue and jobs, but they also face pushback over rising electricity rates, grid strain, water use, noise, and visual impact. If this becomes a major political issue, permitting delays and grid upgrades could slow the overall pace of AI investment. The ratepayer protection pledge discussed above is consistent with this concern.

Counterargument 2: “Build your own power plant” is easier said than done

Power plants face constraints in siting, fuel, regulation, and equipment procurement. The timeline from planning to operation is long. Even with pledges and political pressure, short-term power shortages and grid constraints may not resolve quickly.

Counterargument 3: Space DCs may partly be an investor narrative

SpaceX’s filing shows an ambitious direction, but the practical barriers are significant. The possibility that this contains elements of “story-building” for future fundraising cannot be ruled out. It is prudent to position this as a symbol of terrestrial constraints rather than a near-term solution.

Conclusion: AI Has Become Industrial Infrastructure

In summary, the bottleneck of AI competition has shifted as follows:

- Model superiority: Important, but performance gaps tend to narrow

- GPU procurement: Important, but supply chains are expanding

- Constraints that make DCs viable (power grid, cooling, land, permitting): This is becoming the ultimate bottleneck

The next winners will not be determined solely by who builds the smartest models. The companies that can operate AI at scale, including the power and cooling behind it, will be the ones that ultimately prevail. That advantage favors those with the capital to vertically integrate these capabilities.

100-hour storage, cooling designs that reduce chiller dependency, the politicization of power costs, and even the extreme idea of space data centers — all of these point to the same shift: AI equals infrastructure.

Following models alone is no longer sufficient. Tracking power, cooling, and grid constraints — the less visible bottlenecks — has become essential to understanding the AI era.
